Wikilogs

PkgDiff 1.6 - Added compatibility check

The PkgDiff tool visualizes changes in the files and attributes of Linux software packages of all types. It is intended for maintainers and QA engineers, who can use it to verify changes and to prevent unintentional changes that could break other packages in the repository. System libraries are among the most significant elements of a Linux distribution: an average distribution contains several thousand libraries with a huge number of interdependencies. For this reason, an update of a single library can break the build or behavior of other libraries and eventually cause user applications to malfunction.

In the new version of PkgDiff (1.6) we have added the ability to check whether changes in libraries are compatible. This was made possible by the new ABI Dumper tool, which extracts information about a library's ABI from debug-info files; this information can then be analyzed by the ABI Compliance Checker tool. To check the compatibility of two packages A and B (the old and new versions of a package), pass the corresponding debug-info packages to the tool and run it with the additional -details option:

pkgdiff -old A-debuginfo.rpm -new B-debuginfo.rpm -details

We have added a new ABI Status section to the report, which shows the backward compatibility level of the library ABI. To view the detailed compatibility reports, find the Debug Info Files table in the report and follow the links in the Detailed Report column.

ABI Dumper - A tool to dump ABI from DWARF debug-info of an ELF-object

When you compile an ELF object, such as a shared library or a kernel module, with the additional -g option, debug information is embedded in the object. This information is typically used by the standard debugger gdb to provide additional features when debugging a program. You can read this debug info with the --debug-dump option of the readelf or eu-readelf utility (the latter comes from the elfutils package).

An important part of any ELF object is the application binary interface (ABI) it provides to its client applications. In essence, the ABI is a representation of the object's API at the binary level (after compilation). When you update an object in the distribution, it is important to keep its ABI backward compatible; otherwise, applications may malfunction or crash. Changes in the ABI are usually caused by corresponding changes in the object's API or by changes in configuration and compilation options. To track changes in the ABI of an object, we use the ABI Compliance Checker tool, but until now it could only analyze shared libraries, extracting the information from their header files.

To extend and simplify the use of ABI Compliance Checker, we use the ABI Dumper tool to extract ABI information from an object's debug info. With this tool, one can now track changes not only in the ABI of libraries but also, for example, in the ABI of kernel modules. A typical use case is to create ABI dumps for the old and the new version of an object:

abi-dumper libtest.so.0 -o ABIv0.dump
abi-dumper libtest.so.1 -o ABIv1.dump

and then compare them:

abi-compliance-checker -l libtest -old ABIv0.dump -new ABIv1.dump

Unfortunately, this approach has its drawbacks. Perhaps the main one is that some compatibility checks cannot be performed. For example, it is impossible to check for changes in the values of constants (both #define macros and const global data), since their values are inlined at compile time and are not present in the debug information of the ELF object. Overall, about 98% of all compatibility rules can be checked. Another disadvantage is the long time needed to analyze large objects (bigger than 50 MB), although the dwz utility can be used to compress the input debug info.
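To make "backward-compatible ABI" concrete, here is a minimal Python sketch of the most basic rule such a comparison applies. It is a hypothetical, heavily simplified model, not ABI Compliance Checker's actual algorithm or dump format: each dump is reduced to a dictionary that maps exported symbol names to signature strings. Removed or changed symbols count as incompatible, and newly added symbols count as compatible.

```python
from typing import Dict, List


def compare_abi(old: Dict[str, str], new: Dict[str, str]) -> Dict[str, List[str]]:
    """Classify symbol-level differences between two simplified ABI dumps.

    Each dump maps an exported symbol name to a signature string
    (a hypothetical representation, not the real ABI dump format).
    """
    removed = sorted(name for name in old if name not in new)
    added = sorted(name for name in new if name not in old)
    changed = sorted(name for name in old if name in new and old[name] != new[name])
    return {"removed": removed, "added": added, "changed": changed}


def is_backward_compatible(diff: Dict[str, List[str]]) -> bool:
    # Existing clients may still reference removed or changed symbols,
    # so in this model only pure additions are considered safe.
    return not diff["removed"] and not diff["changed"]
```

For example, if libtest.so.1 adds `test_free` and changes the parameter type of `test_run`, `compare_abi` reports `test_free` as added and `test_run` as changed, and `is_backward_compatible` returns `False`. Real checkers also examine type layouts, calling conventions and many other rules that a symbol table alone cannot express.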

Packaging-tools - useful tools for maintainers

ROSA provides packaging-tools, a set of scripts for maintainers that was originally developed for Ark Linux.

The set includes a spec-file generator for arbitrary packages, vs, which creates a spec-file template and opens it in vim (or in the editor specified in the EDITOR or VISUAL variable). There are also special generators for spec files of specific package types:

- vl: for libraries

- vp: for Perl modules

- vj: for Java packages

These generators create spec-file templates that take into account the specifics of each package type (for example, the necessary subpackages are created for libraries).

Another useful script is e, a simple wrapper for gendiff. If you want to prepare a patch for a package, unpack the source archive and edit the necessary files with e. In fact, this script calls an external editor (specified in the EDITOR or VISUAL variable; vim by default), but before that it saves a copy of the original file with the suffix .rosa2012.1~ (the suffix can be changed with the -s option). When you have finished, run gendiff to create the patch.

Here is an example of how to prepare a patch for the file test.c in the source code of someapp-1.2.3 using the geany editor:

$ tar xJvf someapp-1.2.3.tar.xz
$ cd someapp-1.2.3
$ export EDITOR=geany
$ e test.c
$ cd ..
$ gendiff someapp-1.2.3 .rosa2012.1~ > my.patch

This approach may seem a bit complicated, but it is really convenient when you need to prepare a small patch for a large source file.
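What gendiff produces can be approximated in a few lines of Python. This is a simplified sketch, not gendiff itself (gendiff is a shell script that calls `diff -u`); the function name `gendiff_like` is hypothetical. It walks the source tree, finds every backup saved with the given suffix (such as `test.c.rosa2012.1~`) and emits a unified diff against the edited file.

```python
import difflib
import os
from typing import List


def gendiff_like(directory: str, suffix: str = ".rosa2012.1~") -> str:
    """Return a unified diff for every file in `directory` that has a backup
    copy named <file><suffix>, roughly what `gendiff directory suffix` prints."""
    chunks: List[str] = []
    for root, _dirs, files in os.walk(directory):
        for name in sorted(files):
            if not name.endswith(suffix):
                continue
            backup = os.path.join(root, name)
            current = backup[: -len(suffix)]
            if not os.path.exists(current):
                continue  # edited file was deleted; this sketch skips it
            with open(backup, encoding="utf-8", errors="replace") as f:
                old_lines = f.readlines()
            with open(current, encoding="utf-8", errors="replace") as f:
                new_lines = f.readlines()
            chunks.extend(
                difflib.unified_diff(old_lines, new_lines, fromfile=backup, tofile=current)
            )
    return "".join(chunks)
```

Calling `gendiff_like("someapp-1.2.3")` from the parent directory corresponds to the last command in the example above. Because only files with a backup copy are compared, untouched files in a large tree never appear in the patch.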

DistDiff - visualizing changes in Linux Distros

It is not easy to release stable updates for a Linux distribution. You have to check that all applications still work properly after the update. These applications can be divided into two large groups: basic ones (from the distribution) and personal ones (installed by the user from other sources). Basic applications can easily be checked by installing them into the updated distribution and then testing them manually. But we know nothing about applications from the second group. So, besides checking that applications work, you also have to review the list of all changes in packages.

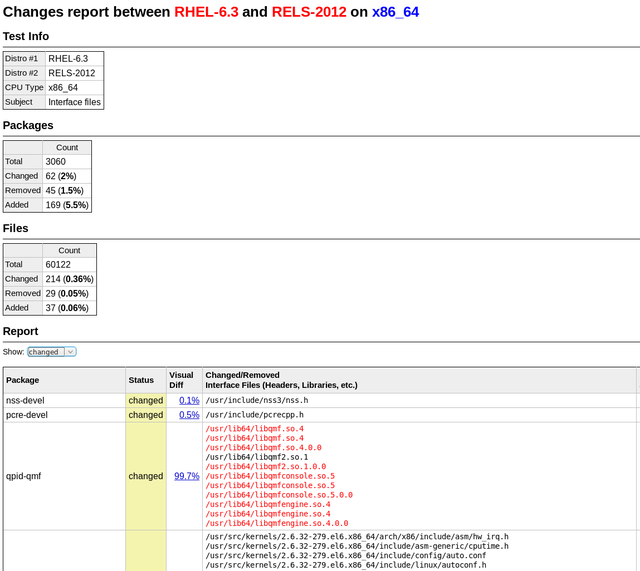

The compatibility of changes in system libraries can be checked with the ABI Compliance Checker tool. To check changes in other packages, we have developed the DistDiff tool. It visualizes changes in all packages of a distribution and lets you quickly look through them for compatibility violations. You only need to pass two directories to the tool: one with the old packages and one with the new ones. In the default mode, the tool checks changes in interface files (libraries, modules, scripts and others) that can potentially affect compatibility. It can also check all files with the "-all-files" option.
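The first step of such a comparison, pairing the packages of the two directories, can be sketched in Python. This is a hypothetical illustration, not DistDiff's code: it assumes the usual `name-version-release.arch.rpm` file naming, whereas a real tool would read package headers instead of trusting file names, and DistDiff goes further and compares the files inside each updated package.

```python
import os
from typing import Dict, List, Tuple


def split_rpm_filename(fname: str) -> Tuple[str, str]:
    """Split 'name-version-release.arch.rpm' into (name, 'version-release.arch')."""
    stem = fname[: -len(".rpm")] if fname.endswith(".rpm") else fname
    name, version, release_arch = stem.rsplit("-", 2)
    return name, f"{version}-{release_arch}"


def index_packages(directory: str) -> Dict[str, str]:
    """Map package name -> version string for every *.rpm in a directory."""
    result: Dict[str, str] = {}
    for fname in os.listdir(directory):
        if not fname.endswith(".rpm"):
            continue
        try:
            name, evr = split_rpm_filename(fname)
        except ValueError:
            continue  # file name does not follow the assumed pattern
        result[name] = evr
    return result


def diff_package_sets(old: Dict[str, str], new: Dict[str, str]) -> Tuple[List[str], List[str], List[str]]:
    """Return (added, removed, updated) package names."""
    added = sorted(set(new) - set(old))
    removed = sorted(set(old) - set(new))
    updated = sorted(n for n in set(old) & set(new) if old[n] != new[n])
    return added, removed, updated
```

Typical use is `diff_package_sets(index_packages(OLD_DIR), index_packages(NEW_DIR))`, where `OLD_DIR` and `NEW_DIR` are the two directories passed to the tool. Only the "updated" packages then need a file-level comparison.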

It is based on PkgDiff, a tool we developed earlier that also visualizes and compares changes in packages.

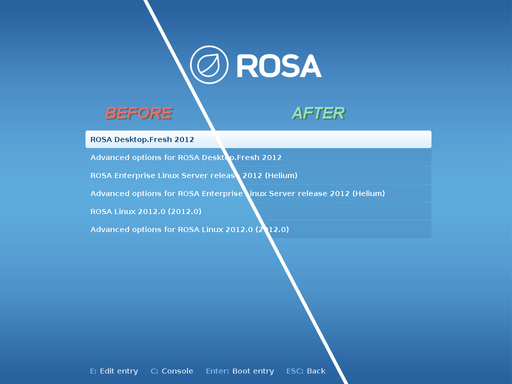

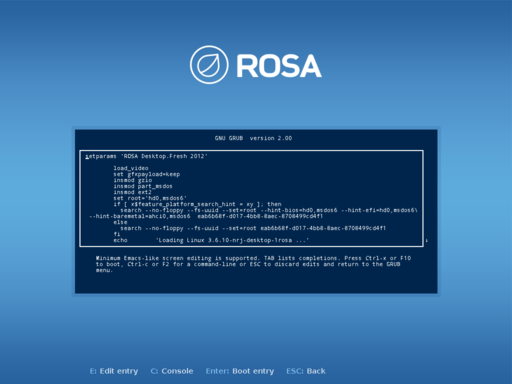

GRUB2 - memory leak and many progress bar bugs fixed

Our developers tend to extend and improve every program they deal with. That's why they have created 5 more updates for the GRUB2 bootloader. The patches were sent to GRUB2 upstream and accepted.

MagOS Linux based on ROSA Marathon

It is nice to have ROSA on your own machine, but many of us sometimes have to work on other people's computers where the operating system cannot be changed. In many cases a LiveCD helps, and ROSA can boot into Live mode from a flash drive or CD/DVD. However, although it is very useful in many respects, Live mode suffers from at least the following issues:

- you can save the results of your work to the computer's hard drive, but it is not easy to save them to the flash drive you booted from;

- there is no way to change system settings, save them and use them during the next boot;

- finally, to write the ISO image to a flash drive and boot into Live mode, you first have to move all files from the flash drive somewhere else, even if it has dozens of GB of free space and the ROSA ISO image needs only 1.5 GB.

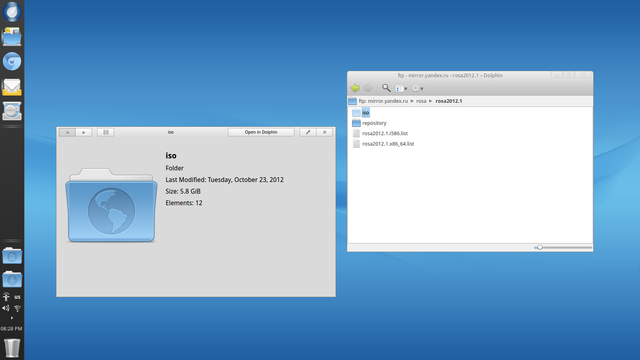

MagOS Linux has none of these disadvantages. Traditionally, MagOS was based on Mandriva, and some time ago a ROSA-based variant was introduced.

Distribution builds are available here: http://magos.sibsau.ru/repository/dist/. The build based on ROSA Marathon has the 2012lts suffix (at the time of writing, the latest build was MagOS_2012lts_20130228.tar.gz).

The build is actually a tarball with three folders: boot, MagOS and MagOS-Data. To install MagOS on your flash drive, unpack the tarball and place these three folders in the root of the flash drive. There is no need to remove existing data from the flash drive, but remember that the system needs about 2 GB of space.

Note that the flash drive must be mounted without the 'noexec' option so that scripts can be launched directly from it (this is required to make the flash drive with MagOS bootable). ROSA uses the 'noexec' option by default, so you will have to mount your flash drive manually from the command line.

To do this, launch a console with root privileges (e.g., in KDE press Alt-F2 and type «kdesu konsole») and execute the following command:

mount -o remount,exec /dev/sdx /mount_point

(here /dev/sdx is the device file corresponding to your flash drive, and /mount_point is the directory where the flash drive is mounted).

For example, if your flash drive partition is /dev/sdb1, remount it like this:

mount -o remount,exec /dev/sdb1 /mount_point

Now copy the boot, MagOS and MagOS-Data folders to /mount_point, go to the /mount_point/boot/syslinux folder and launch install.lin.

You will be asked to confirm that you want to make the device bootable. Press Enter to agree.

Now leave the /mount_point folder and unmount the flash drive with the 'umount /mount_point' command.

Now reboot your machine and boot from the flash drive. You should see the MagOS boot menu with several boot options.

The system is based on ROSA, but by default it uses standard KDE, without SimpleWelcome and RocketBar. Besides KDE, you can use GNOME or LXDE: log out and choose the appropriate session type in the menu at the bottom of the screen. Remember that the default user name and password are user/magos.

MagOS Linux can be installed on a flash drive not only from Linux but also from Windows. It is also possible to install the system on an ordinary machine, either as a standalone system or inside an existing Windows partition.

More details can be found on the MagOS wiki (http://www.magos-linux.ru/dwiki/doku.php), but unfortunately for our foreign users, all the documentation is in Russian. However, MagOS Linux is very easy to use, and in most cases you will not need any additional documentation at all. For getting started, the wiki documentation should be enough: although the text is in Russian, the commands to be executed should be clear to any user. Also note that the first thing you will likely want to do after booting is to change the system locale, which is set to Russian by default. To do this, open the KDE Control Center, find the locale settings and choose English (this option is available by default; to choose other languages, you will have to install the appropriate localization packages, at least the kde-l10n ones).

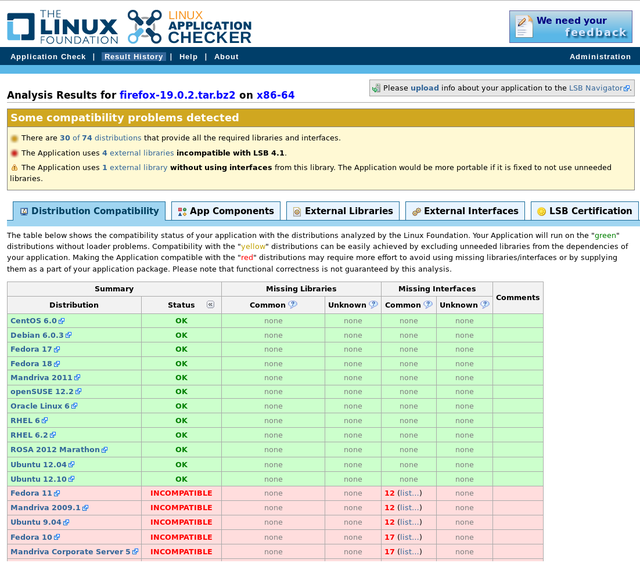

ROSA Marathon is included in the Linux Application Checker knowledge base

The Linux Foundation consortium has announced an updated version (4.1.8) of the Linux Application Checker (AppChecker), a tool that analyzes the compatibility of applications with different Linux distributions and checks their compliance with the Linux Standard Base (LSB). The AppChecker database for the x86 platform currently contains data about 84 distributions, now including ROSA 2012 Marathon. We are going to continue our collaboration with Linux Foundation engineers and provide them with the necessary information about ROSA releases.

AppChecker analyzes the compatibility of an application with a particular distribution by comparing the set of shared libraries and binary symbols required by the application with the sets of libraries and symbols provided by the system. Satisfying these requirements is a necessary condition for the application to launch successfully: if some library or symbol is missing, the application cannot be launched in that distribution. The list of libraries and binary symbols required by the application is obtained by analyzing the application's binary executables (in ELF format) and shared libraries. Naturally, only the requirements that are not satisfied by the application's own libraries are taken into account. In effect, AppChecker emulates the work of the system loader during application launch: if some required libraries or symbols are missing in the system, the application will simply fail to start (or, if lazy binding is used, will fail later while running).
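The check described above can be sketched as a set computation in Python. This is a hypothetical simplification, not AppChecker's implementation: the real loader resolves symbols across all loaded objects and honors symbol versions, while this sketch matches symbols per library soname. Each argument maps a soname to a set of symbol names.

```python
from typing import Dict, Set


def missing_requirements(
    required: Dict[str, Set[str]],
    bundled: Dict[str, Set[str]],
    system: Dict[str, Set[str]],
) -> Dict[str, Set[str]]:
    """Return the libraries/symbols an application needs but the system lacks.

    `required`: symbols the application's ELF files import, per library.
    `bundled`:  libraries shipped with the application itself.
    `system`:   libraries (and their exported symbols) of the distribution.
    """
    missing: Dict[str, Set[str]] = {}
    for soname, symbols in required.items():
        if soname in bundled:
            # Satisfied by the application's own libraries: not checked.
            continue
        if soname not in system:
            missing[soname] = set(symbols)  # the whole library is absent
            continue
        absent = symbols - system[soname]
        if absent:
            missing[soname] = absent  # library present, some symbols missing
    return missing
```

An empty result means the loader-level requirements are satisfied; any entry corresponds to a case in which the application would fail to start, or fail on first use of the symbol under lazy binding.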

Note that AppChecker contains data only about a limited set of widespread libraries, not about all the libraries in distribution repositories. More precisely, the information is guaranteed to be correct for libraries from the 'approved' list (http://linuxbase.org/navigator/browse/rawlib.php?cmd=display-approved), which currently contains almost 1,500 entries, while the repositories of most distributions (ROSA in particular) contain several thousand libraries. If an application requires a library that is not in the approved list, AppChecker will honestly report that it cannot tell whether this library is present in a given distribution.

For example, one can see that the Firefox build downloaded from http://mozilla.org (version 19.0.2 at the time of writing) cannot be used on old systems such as Fedora 10 or Ubuntu 9.04.

ROSA tools in upstream

While creating ROSA, we not only develop and adapt various packages for our distribution but also design new tools for other developers.

One such tool is API Sanity Checker, which automatically generates tests for C/C++ libraries.

The tool needs only the header files with declarations of the library functions (and all the necessary data types). Using this information, API Sanity Checker generates tests that call every function of the library with proper arguments. These automatically generated tests are usually used as a template for developing more complex test suites (enumerating different parameter values, their combinations, etc.).

The tool is absolutely free (the source code can be found here) and can be used by everyone. For example, API Sanity Checker was recently integrated into the development cycle of the GammaLib library, and as a result 11 errors were found and fixed in that library (https://cta-jenkins.irap.omp.eu/job/gammalib-sanity/).

We recommend that upstream developers of C/C++ libraries follow this successful example. Creating such a test suite takes minimal effort, but the number of errors found can be really large.

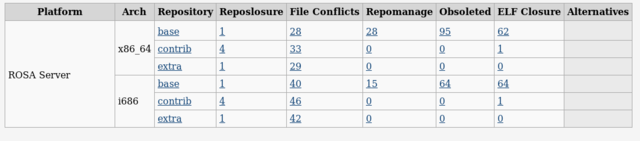

Monitoring of RELS Repositories

As many of you probably know, ROSA repositories are constantly monitored to detect potential problems in the package base. Regularly updated results of this monitoring for the ROSA Desktop series have long been publicly available at http://fba.rosalinux.ru (by the way, we have recently added one more kind of report, «File Conflicts», which lists packages that contain the same files but are not explicitly marked as conflicting with the Conflicts tag).
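The idea behind the «File Conflicts» report can be sketched in Python. This is a hypothetical illustration, not FBA's code: it assumes the file lists and Conflicts entries of each package are already available as plain strings, and it ignores versions in Conflicts as well as directories and other files that packages may legitimately share.

```python
from collections import defaultdict
from itertools import combinations
from typing import Dict, List, Set, Tuple


def undeclared_file_conflicts(
    files: Dict[str, Set[str]], conflicts: Dict[str, Set[str]]
) -> List[Tuple[str, str, List[str]]]:
    """Return (pkg_a, pkg_b, shared_paths) for package pairs that own the
    same files but do not declare a Conflicts on each other."""
    owners: Dict[str, Set[str]] = defaultdict(set)
    for pkg, paths in files.items():
        for path in paths:
            owners[path].add(pkg)

    # Collect, for every pair of packages, the paths they both contain.
    shared: Dict[Tuple[str, str], Set[str]] = defaultdict(set)
    for path, pkgs in owners.items():
        for a, b in combinations(sorted(pkgs), 2):
            shared[(a, b)].add(path)

    report = []
    for (a, b), paths in sorted(shared.items()):
        declared = b in conflicts.get(a, set()) or a in conflicts.get(b, set())
        if not declared:
            report.append((a, b, sorted(paths)))
    return report
```

A pair that declares the conflict in either direction is not reported, which matches the report's focus on packages that are "not explicitly marked as conflicting".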

But Desktop is not the only direction of ROSA development; another important member of the ROSA OS family is ROSA Enterprise Linux Server (RELS). The same kinds of reports are now available for RELS as for ROSA Desktop, except for the Alternatives analysis (which is currently provided only for ROSA Desktop, though we plan to add it for RELS in the near future as well).

As the report table shows, the RELS package base is in really good shape: typical numbers for such reports are dozens or even hundreds of problems, while the number of potentially problematic packages in RELS is close to zero.

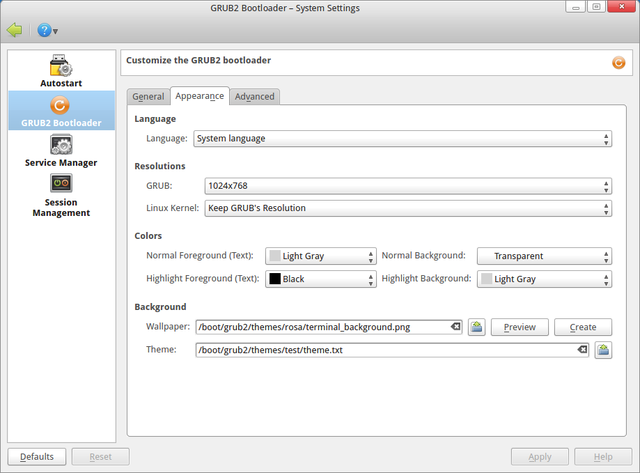

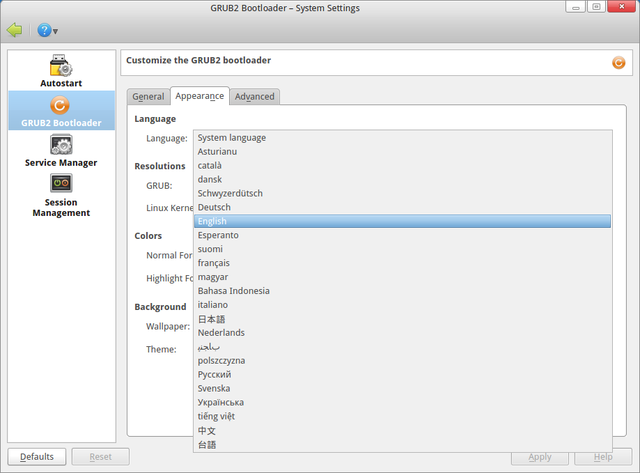

kcm-grub2 contribution - selecting the bootloader language

Our developers have created an update for the GRUB2 manager (kcm-grub2) that adds a new option for selecting the bootloader language. It is useful when the bootloader language needs to differ from the system language.

If "System language" is selected, the GRUB2 manager and the script that regenerates the bootloader configuration (update-grub2) behave as usual: the GRUB2 language will be the same as the system language.

Alternatively, a specific language can be selected, e.g. "English" or "Русский". Then, after the configuration is updated (by saving the new configuration in the GRUB2 manager or by calling the update-grub2 script), the GRUB2 menu will appear in the selected language.

The patch that adds the option to choose the bootloader language was sent upstream and will be included in the next version of the program.

Command-not-found

You can often see the message «bash: foo: command not found», and you surely want to know why. For example, the necessary package is not installed, or there is just a typo. Many users are probably confused after something like this:

$ rpmbuild
bash: rpmbuild: command not found
$ sudo urpmi rpmbuild
No package named rpmbuild
The following packages contain rpmbuild: java-rpmbuild, rpmbuildupdate
You should use "-a" to use all of them

For such cases, the “command-not-found” tool has been created for ROSA! Similar tools exist in other distributions, but until now there was no such tool for ROSA/Mandriva. From now on, all you need to do is install the command-not-found package and open a new terminal. Try typing something weird:

$ foo
No command 'foo' found, did you mean:
 Command 'fio' from package 'fio' (contrib)
 Command 'fop' from package 'fop' (main, installed)
 Command 'for' from package 'execline' (contrib)
 Command 'zoo' from package 'zoo' (restricted)

Perfect! I just wanted to call “zoo” and made a typo. (By the way, notice that the “fop” package is already installed, but we don't need it now.)
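Suggestions like the ones above can be produced by looking up every string within one edit of the typed word. The Python sketch below is an assumption about the approach, not command-not-found's actual code: it generates all variants one deletion, transposition, replacement or insertion away and keeps those found in a command-to-packages mapping (a hypothetical in-memory form of the data).

```python
import string
from typing import Dict, List, Set

# Characters allowed in generated variants (an assumption of this sketch).
ALPHABET = string.ascii_lowercase + string.digits + "-_."


def one_edit_variants(word: str) -> Set[str]:
    """All strings one deletion, transposition, replacement or insertion away."""
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = {a + b[1:] for a, b in splits if b}
    transposes = {a + b[1] + b[0] + b[2:] for a, b in splits if len(b) > 1}
    replaces = {a + c + b[1:] for a, b in splits if b for c in ALPHABET}
    inserts = {a + c + b for a, b in splits for c in ALPHABET}
    return (deletes | transposes | replaces | inserts) - {word}


def suggest(typed: str, db: Dict[str, List[str]]) -> List[str]:
    """Return "did you mean" lines for known commands one edit away."""
    lines = []
    for cmd in sorted(one_edit_variants(typed) & set(db)):
        for pkg in db[cmd]:
            lines.append(f"Command '{cmd}' from package '{pkg}'")
    return lines
```

With a mapping that contains `fio`, `fop`, `for` and `zoo`, `suggest("foo", db)` yields those four commands in alphabetical order, the same set shown in the session above (repository labels omitted).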

$ zoo
Command 'zoo' can be found in:
 package 'zoo' (restricted)
You can install it by typing:
 urpmi zoo
Do you want to install it? (y/N)

All you need to do is type “y”. Don't want to be offered to install a package? Set the environment variable “COMMAND_NOT_FOUND_TURN_OFF_INSTALL_PROMPT=1”, and there will be no more such questions.

Note that when the program is run without a TTY, it does not perform any checks and just prints “command not found”, like bash itself. Also, whatever command-not-found prints, it exits with code 127, as bash does in such cases.

Another command-not-found feature is the analysis of installed packages. For example, if you type ifconfig while /sbin is not in your PATH, you get:

$ ifconfig
Command 'ifconfig' can be found in:
 package 'net-tools' (main, installed)
File /sbin/ifconfig exists! Check your PATH variable, or call it using an absolute path.

command-not-found also includes the “cnf” utility. It does everything described above (in fact, it is executed every time bash fails to run a command). In other words, "cnf foo" gives you the same output as typing “foo” in the console. You can also use “cnf” to find out which package an installed program came from.

You have probably installed command-not-found already. Did you notice that another package was installed along with it, command-not-found-data? This package contains the database (a JSON file) from which cnf takes its information. Since repositories are constantly changing, this database has to be updated from time to time. That is why the package is rebuilt with up-to-date data once a week and reaches you together with other updates.
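The lookup step and the exit-code behavior described above can be sketched in Python. The layout of the JSON data here (`{"command": ["package", ...]}`) and the `handle_missing`/`main` helpers are hypothetical, since the real format of command-not-found-data is not described. Likewise, checking stdout for a TTY is an assumption of this sketch; the text only says that no check is done without a TTY.

```python
import json
import sys
from typing import Dict, List, TextIO


def load_db(text: str) -> Dict[str, List[str]]:
    # Hypothetical layout: {"command": ["package", ...], ...}
    return json.loads(text)


def handle_missing(cmd: str, db: Dict[str, List[str]], out: TextIO, interactive: bool) -> int:
    """Report a missing command; always return 127, like bash does."""
    if not interactive:
        # No TTY: behave like plain bash and skip all lookups.
        out.write(f"{cmd}: command not found\n")
        return 127
    packages = db.get(cmd)
    if packages:
        out.write(f"Command '{cmd}' can be found in:\n")
        for pkg in packages:
            out.write(f" package '{pkg}'\n")
    else:
        out.write(f"{cmd}: command not found\n")
    return 127


def main(argv: List[str]) -> int:
    # Hypothetical entry point: argv[1] is the missing command,
    # argv[2] the path to the JSON database.
    with open(argv[2], encoding="utf-8") as f:
        db = load_db(f.read())
    return handle_missing(argv[1], db, sys.stderr, sys.stdout.isatty())
```

Returning 127 in every branch keeps scripts that check `$?` working the same way whether or not command-not-found is installed.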

We hope that your work with the console will become more pleasant :)

Automated testing system

After several months of development, the initial version of the automated testing system for ROSA Linux distributions («ROSA Autotest») is up and running.

For now, the system checks ROSA Desktop Fresh 2012 releases. It works as follows:

- Checks daily whether new ISO images of this distribution are available at ABF and downloads them to a local machine for testing.

- Checks whether the OS can be started in live mode from these ISO images on virtual machines.

- Checks whether the OS can be installed on virtual machines from these ISO images.

- Enables the default software sources on the installed systems and performs a software update there.

- Uses Autotest (http://autotest.github.com/) to run several tests on the installed systems:

  - a clock manipulation test («hwclock»);

  - a stress test for filesystem-related components of the kernel («dbench»);

  - simple tests for IPv6-related software («ipv6connect»);

  - etc.

More tests are to be added to the system in the future.

The results of the automated testing are available at FBA: http://fba.rosalinux.ru/autotest/.

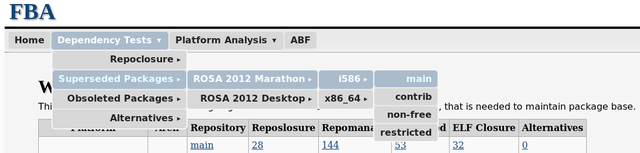

FBA — New look, new sections and other reports enhancements

Over the last months we have added many reports to our site http://fba.rosalinux.ru, which is used to monitor ROSA repositories. It became very easy to get lost among all these reports, so we reorganized the main menu:

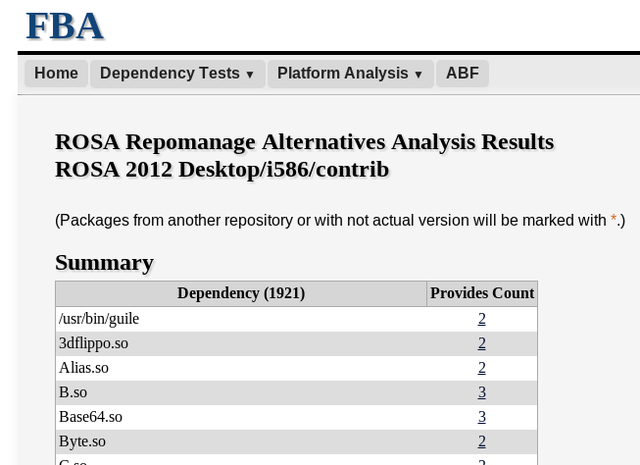

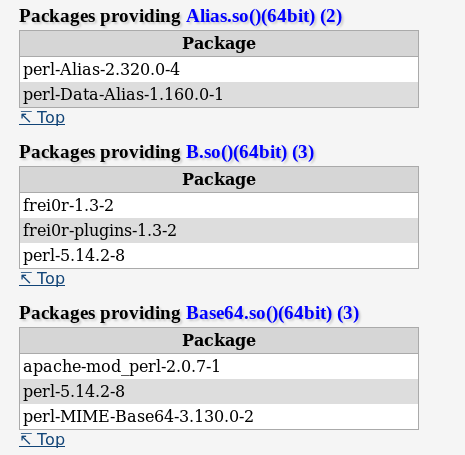

We are constantly working on adding new sections, and in the future we plan to add Rpmlint reports and reports about file conflicts and cyclic dependencies. You will be able to see beta versions of these statistics in FBA in the near future. And now let us present one more new kind of report: statistics about «alternatives», that is, dependencies that can be satisfied by several packages at the same time.

There are different reasons why ROSA repositories contain many packages with the same Provides records. Some of these cases are valid, but sometimes such alternatives are excessive and just confuse users, who are asked which package they would like to install to satisfy a particular dependency. Unfortunately, in the past we did not pay much attention to the large number of alternatives. As a result, not all packages with identical Provides records are really alternatives from a functional point of view. Sometimes this has historical roots, sometimes it results from incorrect work of the dependency generator, and sometimes such cases appear because maintainers made mistakes through inattention when forming the set of dependencies. As a result, if the wrong alternative is chosen, installed applications do not work and fail with errors like this.

Until now, dubious and incorrect alternatives were detected and corrected only occasionally, when maintainers ran into conflicts personally or when we received corresponding requests from users. But now we have added the necessary analysis tools to FBA (thanks to urpm-repograph, which already provides all the required functionality), so ROSA repositories are constantly monitored for packages with identical Provides.
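In FBA the analysis is done by urpm-repograph; the Python sketch below only illustrates the idea. It assumes that each package's Provides and the set of all capabilities required by some package are available as plain strings, and it ignores versions. A capability becomes an «alternative» when it is required somewhere and more than one package provides it.

```python
from collections import defaultdict
from typing import Dict, List, Set


def find_alternatives(provides: Dict[str, Set[str]], required: Set[str]) -> Dict[str, List[str]]:
    """Map each required capability to its providers, keeping only
    capabilities that more than one package provides."""
    providers: Dict[str, Set[str]] = defaultdict(set)
    for pkg, caps in provides.items():
        for cap in caps:
            providers[cap].add(pkg)
    return {
        cap: sorted(pkgs)
        for cap, pkgs in sorted(providers.items())
        if cap in required and len(pkgs) > 1
    }
```

Duplicate Provides that nothing requires are skipped, because they never cause the installer to ask the user a question; this mirrors the definition above of alternatives as dependencies that several packages can satisfy.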

The monitoring results are available here: http://fba.rosalinux.ru/test/repomanage_alternatives/.

If you think that some alternatives should be removed, feel free to send your suggestions to our developers :) Of course, some duplicates are legitimate. In the future we will mark such cases separately so that they are not treated as errors.

Besides adding new reports, we are working on improving the existing ones. On various pages you can now see not only the name of a package that contains errors but also the list of packages that depend on the broken one. This is especially relevant for repository closure analysis: if some package cannot be installed because of unsatisfied dependencies, then the packages that depend on it cannot be installed either. That is why it is important to estimate how many packages will be «lost» to users as a result of a dependency breakage. For example, in repoclosure reports you can jump to the «Broken Packages» table. For every broken package, it shows the number of packages that depend on it; click that number to get the list of such packages. A question mark instead of a number means that there is a newer version of this package in the repository, so no package depends on this particular version.
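Estimating how many packages a breakage "loses" amounts to a reverse-dependency traversal. The text does not say whether FBA counts only direct dependents or the full transitive set, so this Python sketch is an assumption: it computes the transitive set, since every package in it becomes uninstallable too. It also assumes that dependencies are already resolved to package names.

```python
from collections import defaultdict, deque
from typing import Dict, Set


def affected_by(broken: str, depends_on: Dict[str, Set[str]]) -> Set[str]:
    """Packages that directly or transitively depend on `broken`."""
    # Invert the graph: for each package, who depends on it.
    reverse: Dict[str, Set[str]] = defaultdict(set)
    for pkg, deps in depends_on.items():
        for dep in deps:
            reverse[dep].add(pkg)

    seen: Set[str] = set()
    queue = deque([broken])
    while queue:
        current = queue.popleft()
        for parent in reverse[current]:
            # The `!= broken` guard keeps dependency cycles from
            # counting the broken package as its own dependent.
            if parent not in seen and parent != broken:
                seen.add(parent)
                queue.append(parent)
    return seen
```

`len(affected_by(pkg, graph))` then gives a number comparable to the one shown in the «Broken Packages» table, and the set itself corresponds to the list you get by clicking it.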

Finally, there is one more useful improvement: the names of SRPM packages in repoclosure reports are now links to the corresponding projects in ABF. So you can go from the report page straight to the project page in ABF (moreover, the necessary Git branch will be chosen automatically).

Another contribution to GRUB upstream

Implementing a concept developed by our designer, we have added new functionality to GRUB2 visual themes. It is now possible to decorate inactive entries of the boot menu (using the item_pixmap_style property). As a result, they now have a dark semi-transparent background with rounded corners. These changes open new opportunities for designing bootloader themes.

We have also fixed a bug in the grub-mkfont tool, which converts fonts into the format used by the bootloader. It was impossible to specify one of the font metrics (ascent), so when national characters (e.g., Cyrillic) were displayed in the console, all characters were rendered incorrectly. Fixing this issue solved the problems with "artifacts", increased line spacing and incompletely drawn characters.

Both updates were sent upstream and accepted.

Console fonts in ROSA

Although ROSA's main features are its graphical components and beautiful, full-featured GUI applications, our distribution also has a console. It has its own audience: for example, developers who deal with system internals, or experienced Linux users who use console utilities from time to time.

We think that the console should be beautiful, convenient and easy to use, like everything in ROSA. One of the main usability criteria is the font used in the console. The choice of font is largely a matter of taste, and, as it turned out, the «native» KDE fonts do not suit everyone.

In particular, many users think that the font used for the KDE3 console in a wide range of distributions (for example, openSUSE 10.x) was the most suitable one. The fonts changed with the migration to KDE4, but some people still like the old one. If you are among them, we have good news for you: this font is now available in our distributions. Just install the fonts-bitmap-misc-console package, and the new Console font will become available in the Konsole settings (in a single fixed size, 12).

Another popular font is Source Code Pro, developed by Adobe engineers (http://blogs.adobe.com/typblography/2012/09/source-code-pro.html). This font is also available in our repositories (the SourceCodePro package). Although it does not support the Cyrillic alphabet and many other national character sets, programmers who work a lot with source code may like it.

KLook 2.0: Better than ever

We have published a new version of KLook with many improvements in our build system, ABF. Most of the improvements were made in KLook's architecture, so you will not see many visible changes, but you will feel them.

The first and main improvement: we added support for remote file systems! You can now use KLook with FTP, SMB, WebDAV and other directories and files that can be opened in the Dolphin file manager.

If the file you try to open in KLook is a folder or an archive, KLook just shows an information window about it; otherwise, KLook tries to open the file and show it to you.

The second improvement: we moved the handling of previews in "Gallery Mode" from the QML side to the C++ side, which significantly improved preview quality.

For those who still do not use ROSA, there is a fly in the ointment: it seems that we will not be able to push KLook to the official KDE repositories. The new maintainer of the Dolphin file manager rejects all our attempts to get our code into KDE upstream. So this may be one more reason to use ROSA. :)

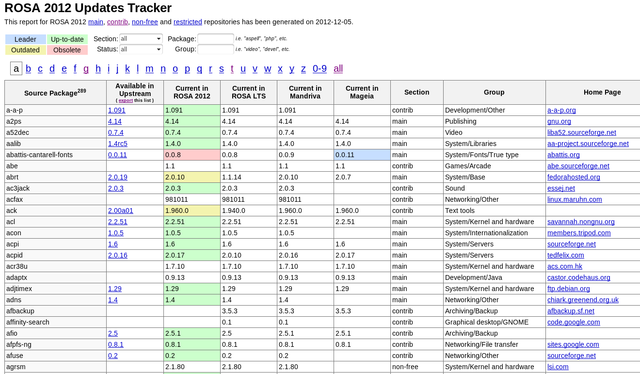

Updates builder — automatically detect and build package updates in ABF

In December we introduced an alpha version of a new tool that we hope many of you will find useful: updates_builder.

In short, the tool performs the following actions:

- Checks for available upstream updates

- If an update is found, the tool downloads the corresponding tarball, pushes it to the ABF file store, updates the spec and .abf.yml in the corresponding project of the import group, and starts a build for rosa2012.1.

So with a single one-line command you can check whether there is an update for a package and, if so, try to build it in ABF.

Don't worry, all changes are made in a separate branch, 'auto_update'. The build is performed for the 'import_personal' repository.

More info and examples:

The tool is currently available in akirilenko_personal repository.

To get information about available updates, the tool uses upstream-tracker.org service (http://upstream-tracker.org/updates/rosa/2012/):

We believe that the tool will be very useful: at the very least, it lets you check almost automatically how much effort it will take to update a package to a newer version.

Of course, some technical aspects are open to discussion, but after several experiments we can say that the tool works quite well: we have already updated some packages in ROSA 2012 Desktop with it.

With such a tool we can implement many nice features in the future, e.g., set up automated tracking and building of new package versions on our servers. Then you will only have to say "hey, I want to monitor that package", and you will receive not only notifications about new upstream releases but also the results of the first attempt to build those releases in ABF.

ABF API. New changes, challenges and plans

The ABF API is evolving, and here we present the recent changes.

First, we have added a new field with the URL of the project's Git repository to the project block in all API calls that return a project block:

"git_url": "path to project git"

You can use it if you need it. This is a backward-compatible change and should not affect any existing tools that work with the API.
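Adding a field is backward compatible as long as clients read only the keys they know, as this Python sketch illustrates. Only `git_url` comes from the text; the `name` key, the `Project` class and the sample values are hypothetical and do not describe the real ABF response schema.

```python
import json
from dataclasses import dataclass
from typing import Any, Dict, Optional


@dataclass
class Project:
    name: str
    git_url: Optional[str] = None  # absent in responses from older servers


def parse_project(block: Dict[str, Any]) -> Project:
    # Pick only the fields this client knows about. Extra keys that the
    # server adds later are ignored, so new fields cannot break the client,
    # and a missing git_url simply becomes None.
    return Project(name=block["name"], git_url=block.get("git_url"))


if __name__ == "__main__":
    raw = '{"name": "foo", "git_url": "https://example.org/foo.git", "new_field": 1}'
    print(parse_project(json.loads(raw)))
```

A client written this way also keeps working against an older server that does not send `git_url` yet.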

Next, we have added some new API calls:

- Search API - http://abf-doc.rosalinux.ru/v1/search/

- Get build lists for a project - http://abf-doc.rosalinux.ru/v1/buildlists/#list-build-lists-for-a-project

... and currently we are working on Maintainers API (API to work with Maintainers DB) which will be available in the near future - http://abf-doc.rosalinux.ru/v1/maintainers/

Finally, we have added information about specific conditions for a number of calls and implemented informative error messages for the following cases:

- build cancellation (http://abf-doc.rosalinux.ru/v1/buildlists/#cancel-build-list)

- build publishing (http://abf-doc.rosalinux.ru/v1/buildlists/#publish-build-list)

- publishing rejection (http://abf-doc.rosalinux.ru/v1/buildlists/#reject-publish-build-list)

Please check these links and update your software if needed.

Stay with us!

ABF console client

As we wrote before, a new way of interacting with ABF has recently appeared: the console client. In October, ABF developers put a lot of effort into developing the API. This made it possible to add many features to the console client, but we decided to improve the basic functionality first. So, what is ABF-Console-Client needed for? Let's look at the package life cycle: a user wants to create a project (or get an existing one), modify it, build it and publish it. Let's go through these steps in order.

- Clone git repository

- project name is assumed to be known. User have to open ABF web page, search for a project, go to a project page, copy git URL, clone repository using git. However, it can be done easier. Just execute «abf get PROJECT», where PROJECT is a project name with owner (owner/proj_name). Owner can be omitted, default group will be used.

- Project modification

- When you’ve got files in your project modified, you can execute «abf put -m MSG». It will execute «git add --all», «git commit -m MSG», «git push». Additionally, all the source archives have to be uploaded to File-Store and .abf.yml file have to modified. It’s a routine work, especially when you have to build a hundred projects a day. Console client will do it for you automatically.

- Create a build-task

- To do it, you have to open an ABF web page, tick some check-boxes and click "Start build". That does not look like much work, but if you have to build hundreds of packages a day, the console client is much faster, especially if you write your own script that calls it.

- Building process

- To check the current build status, you no longer have to check the web page. Just execute «abf buildstatus ID» to get a short summary. If ID is omitted, the status of the last build task is shown; this is described in more detail later.

- Publication

- «abf publish ID» will do it easily.
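The life cycle above is easy to drive from a script. Below is a minimal Python sketch of such a batch script, assuming the local checkouts already exist and that running «abf put -m MSG» and then «abf build» inside a checkout commits the changes and creates a build task for that project with default settings. The directory names are hypothetical, and nothing is executed unless dry_run is switched off.

```python
import subprocess
from pathlib import Path
from typing import List, Sequence, Tuple

# One step of the plan: (working directory, command line to run there).
Step = Tuple[Path, List[str]]


def build_plan(project_dirs: Sequence[Path], message: str) -> List[Step]:
    """For each project checkout: push changes with «abf put», then start a build."""
    plan: List[Step] = []
    for directory in project_dirs:
        plan.append((directory, ["abf", "put", "-m", message]))
        # Assumption: «abf build» with no options builds the project of the
        # current directory using its default settings.
        plan.append((directory, ["abf", "build"]))
    return plan


def run_plan(plan: Sequence[Step], dry_run: bool = True) -> None:
    for cwd, argv in plan:
        if dry_run:
            print(f"[{cwd}] {' '.join(argv)}")
        else:
            # check=True raises CalledProcessError and stops at the first failure.
            subprocess.run(argv, cwd=cwd, check=True)


if __name__ == "__main__":
    # Hypothetical checkout locations; replace with your own.
    checkouts = [Path.home() / "abf" / name for name in ("foo", "bar")]
    run_plan(build_plan(checkouts, "Update to the new upstream version"))
```

Keeping the plan separate from its execution makes it possible to review the full list of commands before anything is pushed.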

As you can see, the console client makes a maintainer’s work a bit easier. That’s why we work hard to improve the tool and satisfy maintainers' needs.

The list of features added in the last version:

- The ABF client caches the locations of the git repositories in your system whenever it accesses them. If you already have lots of repositories, you can cache their locations in one go: just run «abf locate update-recursive -d PATH», where PATH is a directory containing repositories. Why should the client know the location of every repository? For example, you can now execute «abfcd PROJECT» to jump to the project's directory, or learn the location of any project by executing «abf locate -p PROJECT». In the future, the console client will also be able to move files not only between project branches, but also between projects.

- The first version of the console client had only a basic bash autocompletion script. Now it completes almost everything: option names, git branches, and build and save-to repositories for «abf build» (the latter requires a project name, so you have to type --project/-p before --save-to-repository/-s). As a result, working with the console client is much easier, because you don’t have to type long repository names or remember the list of possible values. Sometimes autocompletion is slow (about 1 second), but the results are cached, so subsequent completions are faster.

- A new command, «abf clean», has been added. It scans the spec file, the .abf.yml file and the current directory and reports the problems found. If some source or patch file cannot be found (or resolved via a URL or .abf.yml), it prints an error message. It also prints warnings, for example, if a file is specified both in .abf.yml and in the spec file. The same check runs automatically when you create a build task from the current directory, and a failed check prevents the build task from being sent. This check can be turned off with the --skip-spec-check option.

- When a user creates a new build task, its ID is stored and associated with the project. «abf buildstatus» prints the summary for the last build task; if a project was specified (or you are in a project directory), it prints information about the last build task for that project.

- Work with the API. The number of unnecessary API calls has been significantly reduced. When you request information about a repository, for example, the API also returns some partial information about its platform. The older version used only the ID of this platform and downloaded the full platform information with one more API call. Now this partial information is stored in a platform object that is marked as a 'stub'. When you access a field that is not loaded yet but has to be present in this class, a new API call is made and the information is retrieved. As a result, the first console client call no longer results in dozens of API calls.

- One more interesting feature is support for HTTP ETags, used to cache the results of API calls with automatic revalidation. This speeds things up without the risk of working with outdated data. ABF is not yet fully configured to support this technology, but it is going to process requests for cached data quickly, and such requests will no longer increase the API call counter (every IP is limited to 500 API calls per hour).
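To illustrate the idea behind «abf locate update-recursive», here is a minimal Python sketch of a recursive repository scan that records project locations in a JSON cache. It is not the client's actual code: the cache format and file name are assumptions, and the project name is simply taken from the directory name, whereas the real client may derive it from the git remote.

```python
import json
import os
from pathlib import Path
from typing import Dict, Optional


def find_repositories(root: Path) -> Dict[str, str]:
    """Map project names to paths for every git checkout under root."""
    found: Dict[str, str] = {}
    for dirpath, dirnames, _filenames in os.walk(root):
        if ".git" in dirnames:
            # Simplification: use the directory name as the project name.
            found[os.path.basename(dirpath)] = dirpath
            dirnames.clear()  # do not descend into the checkout itself
    return found


def load_cache(cache_file: Path) -> Dict[str, str]:
    if cache_file.exists():
        return json.loads(cache_file.read_text())
    return {}


def update_recursive(cache_file: Path, root: Path) -> Dict[str, str]:
    """Merge all checkouts found under root into the cache and save it."""
    cache = load_cache(cache_file)
    cache.update(find_repositories(root))
    cache_file.write_text(json.dumps(cache, indent=2, sort_keys=True))
    return cache


def locate(cache_file: Path, project: str) -> Optional[str]:
    """Return the cached path of a project, or None if it is unknown."""
    return load_cache(cache_file).get(project)
```

A helper such as «abfcd» then only needs a cache lookup instead of a filesystem search.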
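The kind of check «abf clean» performs can be sketched in Python as follows. This is a simplified illustration, not the client's implementation: it only looks at Source/Patch tags, skips values containing RPM macros, treats URL sources as resolvable without downloading them, and expects the file names listed in .abf.yml to be passed in (parsing the YAML file is left out). The warning rule shown, a file that is both present locally and listed in .abf.yml, is a hypothetical example chosen for illustration.

```python
import re
from pathlib import Path
from typing import Iterable, List, Tuple

# Matches spec header tags such as "Source:", "Source0:" or "Patch12:".
TAG_RE = re.compile(r"^(Source|Patch)(\d*)\s*:\s*(\S+)", re.IGNORECASE | re.MULTILINE)


def check_spec(spec_text: str, directory: Path,
               abf_yml_files: Iterable[str]) -> Tuple[List[str], List[str]]:
    """Return (errors, warnings) for the Source/Patch entries of a spec file."""
    listed_in_yml = set(abf_yml_files)
    errors: List[str] = []
    warnings: List[str] = []
    for tag, number, value in TAG_RE.findall(spec_text):
        label = f"{tag}{number}"
        if "%" in value:
            warnings.append(f"{label}: '{value}' uses macros, not checked")
            continue
        name = value.rsplit("/", 1)[-1]  # a URL source maps to its file name
        local = (directory / name).is_file()
        listed = name in listed_in_yml
        if local and listed:
            warnings.append(f"{label}: '{name}' is both a local file and listed in .abf.yml")
        elif not (local or listed or "://" in value):
            errors.append(f"{label}: '{name}' not found locally, in .abf.yml or via a URL")
    return errors, warnings


def may_submit(errors: List[str], skip_spec_check: bool = False) -> bool:
    """Errors block sending a build task unless the check is skipped."""
    return skip_spec_check or not errors
```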
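The 'stub' objects described in the API item above are a form of lazy loading. Below is a minimal Python sketch of the technique using __getattr__, which Python calls only when normal attribute lookup fails. The class names, the field list and the fetch function are hypothetical; in the real client the fetch would be an API request.

```python
from typing import Any, Callable, Dict, List, Tuple

Fetcher = Callable[[int], Dict[str, Any]]


class Model:
    """An API object that may hold only part of its data (a 'stub')."""

    FIELDS: Tuple[str, ...] = ()  # fields every fully loaded object has

    def __init__(self, fetch: Fetcher, obj_id: int, **partial: Any) -> None:
        self._fetch = fetch
        self.id = obj_id
        self.stub = True
        # Keep whatever data came embedded in another API response.
        self.__dict__.update(partial)

    def __getattr__(self, name: str) -> Any:
        # Only reached when the attribute is not loaded yet.
        if name in type(self).FIELDS and self.stub:
            self.__dict__.update(self._fetch(self.id))  # one extra API call
            self.stub = False
            if name in self.__dict__:
                return self.__dict__[name]
        raise AttributeError(name)


class Platform(Model):
    FIELDS = ("name", "description")  # hypothetical field list


if __name__ == "__main__":
    requests: List[int] = []

    def fetch_platform(platform_id: int) -> Dict[str, Any]:
        requests.append(platform_id)  # stands in for a real API request
        return {"name": "example_platform", "description": "Example platform"}

    # Partial platform data as it might arrive inside a repository response.
    platform = Platform(fetch_platform, 7, name="example_platform")
    print(platform.name, len(requests))         # already known: no fetch
    print(platform.description, len(requests))  # triggers the single fetch
```

Fields that arrived with the parent response cost nothing; the first access to a missing field loads the full object once, and later accesses are ordinary attribute lookups.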
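HTTP ETag revalidation works like this: the client remembers the ETag header returned with a response and sends it back in an If-None-Match header; if the resource has not changed, the server answers 304 Not Modified without a body and the cached copy is reused. Below is a minimal Python sketch of such a cache. The transport is injectable so the logic can be tried without a server, and the class and function names are hypothetical rather than the console client's own.

```python
import urllib.error
import urllib.request
from typing import Callable, Dict, Optional, Tuple

# A transport sends a GET and returns (status, etag, body).
Transport = Callable[[str, Dict[str, str]], Tuple[int, Optional[str], bytes]]


def urllib_transport(url: str, headers: Dict[str, str]) -> Tuple[int, Optional[str], bytes]:
    request = urllib.request.Request(url, headers=headers)
    try:
        with urllib.request.urlopen(request) as response:
            return response.status, response.headers.get("ETag"), response.read()
    except urllib.error.HTTPError as err:
        if err.code == 304:  # urllib reports 304 Not Modified as an HTTPError
            return 304, err.headers.get("ETag"), b""
        raise


class ETagCache:
    """Cache GET responses and revalidate them with If-None-Match."""

    def __init__(self, transport: Transport = urllib_transport) -> None:
        self._transport = transport
        self._store: Dict[str, Tuple[str, bytes]] = {}  # url -> (etag, body)

    def get(self, url: str) -> bytes:
        cached = self._store.get(url)
        headers = {"If-None-Match": cached[0]} if cached else {}
        status, etag, body = self._transport(url, headers)
        if status == 304 and cached:
            return cached[1]  # server confirmed the cached copy is current
        if etag:
            self._store[url] = (etag, body)
        return body
```

Storing the ETag together with the body leaves the freshness decision to the server, so the client never serves data the server considers outdated.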

As you can see, development of the ABF console client continues. All your feedback (positive or negative), suggestions and regards are welcome.

ABF: Basic API, inline comments

In October, the ABF team presented two new features: the ABF API and inline comments.

Now you can perform all basic operations in ABF using the ABF API, except for working with the Maintainers DB and product builds (ISO creation). The documentation can be found at http://abf-doc.rosalinux.ru/.

ABF API provides about 60 API calls.

Note that the API is in beta, so breaking changes may occur.

Currently, the main consumer of the ABF API is the ABF console client.

The next feature is inline comments: ABF now lets you either comment on a commit as a whole or click on any line and start a conversation about that particular line. This makes it possible to discuss spec files, patches or new code more specifically. Try it!