Wikilogs

Hardware trends

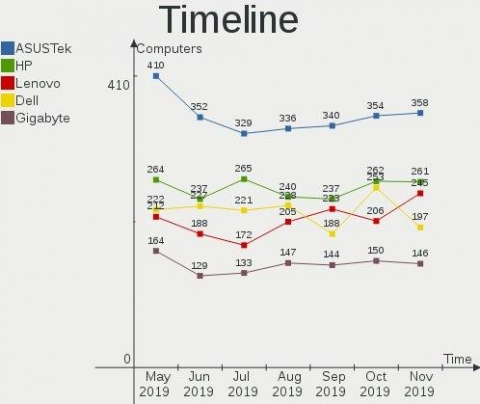

Today I'm glad to announce the next major update of the hardware database, a live statistical report on the Linux-powered hardware configurations of our users: https://linux-hardware.org/?view=trends

The report helps answer questions like "How popular are 32-bit systems?", "How fast is the SSD market share growing?", "Which hard drives are less reliable?", "How many computers use old CPU microcode?", "How good is device driver support?", etc.

In addition to the ROSA distribution, other Linux distributions also took part in the study. The most active participants currently are Ubuntu, Mint, Endless, Fedora, Arch, Manjaro, Debian, Zorin, openSUSE, KDE neon, Clear Linux and Gentoo. The results are most accurate for the distributions at the top of this list.

All charts and table rows are clickable, so you can see the details of the particular computers counted in the statistics. In other words, in addition to statistics and forecasting, the report can be used as a powerful search engine.

The static version of the report for the current month is also available in the Github repository.

The report is built from user probes created with hw-probe (for other distributions it is available as AppImage, Snap, Flatpak and Docker):

hw-probe -all -upload

Please participate!

Probes of the current month are accumulated and appear in the statistics on the first day of the next month. Please let us know if you have ideas for new statistical reports that are not yet implemented in the study.

Search for drivers

It often happens that a couple of devices in your computer do not work properly under Linux out of the box. The reason may be hardware that is too new (not yet supported by the kernel), missing Linux drivers (not provided by hardware vendors), hardware that is too old, incompatible devices (e.g. a storage controller and a drive model) or a defect. According to Linux-Hardware.org data, at least 10% of Linux users encounter such problems. According to our statistics, the most problematic devices are:

- WiFi cards

- Bluetooth cards

- Card readers

- Fingerprint readers

- Smart card readers

- Printers

- Scanners

- Modems

- Graphics cards

- Webcams

- DVB cards

- Multimedia controllers

If a device does not work, this does not mean that it cannot be configured properly. Sometimes you can find a more suitable kernel or a third-party driver for the device on the Internet. To find the required kernel, you can use the LKDDb database, which indexes the device drivers supported by every Linux kernel version, or search for a solution on forums and similar resources.

Today we are launching a new way to find drivers: by creating hardware probes! If a driver was not loaded for some device in your computer's probe, the database engine will offer a suitable kernel version or known third-party drivers. The same information is presented on each PCI/USB device page in the database.

A probe can be created by the following command (there is an AppImage for other Linux distributions):

hw-probe -all -upload

The driver search is based on the LKDDb database for kernels from 2.6.24 up to the newest, 5.0. We have also indexed the following third-party drivers:

- nvidia — NVIDIA graphics

- wl — Broadcom WiFi-cards

- fglrx — AMD/ATI graphics

- hsfmodem — modems

- sane — scanners

- foomatic — printers

- gutenprint — printers

- and about 100 drivers from Github for WiFi cards and other devices

Yes, we have indexed fglrx for old AMD/ATI graphics cards. In some cases this driver performed better than the free radeon driver, but you need to install an older version of your Linux distribution, because fglrx is not supported by the newest Linux kernels and distributions.

This is not the only case where you need to roll back to an old kernel version. For some devices, the drivers become obsolete and are removed from the kernel. In such cases, you need to pick an older release of your Linux distribution with a suitable old kernel, and then manually update any necessary software that is not provided in its repositories (e.g. the browser).

Review of hardware probes

Did you manage to configure a hardware device that did not work out of the box? Did you find the right driver? The device does not work and you don't know what to do? Write a note about your experience right now in your hardware probe!

No registration is needed: your computer is authorized while the probe is created. Just create a probe and open it in your browser right away. You'll see a big green REVIEW button on the probe page for creating a review.

In the review, you can adjust the automatically detected operability status of a hardware device and write a comment in Markdown for any device.

Statuses and comments are assigned to the corresponding devices in the open database. Other users with the same problem will quickly learn from your experience by creating probes of their computers and following the links to the database on the probe page.

You can create a probe with the command:

hw-probe -all -upload

Or with the Hardware Probe application in the SimpleWelcome start menu of the ROSA Fresh Linux distribution (KDE4 and Plasma editions). There are also portable AppImage, Docker, Snap and Flatpak packages for any other Linux distribution.

Hardware database for all Linux distributions

The Linux-Hardware.org database has recently been divided into a set of databases, one per Linux distro. You can choose your favorite Linux distribution on the front page and hide probes and information collected from other Linux distributions.

Anyone can contribute to the database with the help of the hw-probe command:

hw-probe -all -upload

Hardware failures are highlighted in the collected logs (important SMART attributes, errors in dmesg and the Xorg log, etc.). The database is also handy for searching the community's probes for particular hardware configurations and for reviewing log errors to check whether the devices on board work (for some devices hw-probe does this automatically; see the device statuses in your probe).

Hardware stats and raw data are dumped to several Github repositories: https://github.com/linuxhw

Thanks to everyone for your attention and new computer probes!

Checking devices operability

We've implemented automated operability checks for devices based on analysis of the system logs collected in probes. For each device in the probe we check whether the driver is loaded and used and whether the device performs basic functions: for network cards we check received packets, for graphics cards the absence of critical errors in the Xorg log and dmesg, for drives the S.M.A.R.T. test results, for monitors the EDID, and for batteries the remaining capacity.

The operability status is detected for the following devices:

- Graphics cards

- WiFi cards

- Ethernet cards

- Bluetooth cards

- Modems

- Hard drives

- Monitors

- Batteries

- Smart card controllers

For the following devices we can only detect whether the device has failed to operate:

- Sound cards

- Card readers

- Fingerprint readers

- TV cards

- DVB cards
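As a rough illustration of two of the checks described above (received packets for network cards, remaining capacity for batteries), here is a minimal Python sketch that reads standard Linux sysfs attributes. It is not hw-probe's code, which analyzes the logs collected in the probe. The function names, the "rx_packets > 0" rule, reading "remaining capacity" as current full capacity relative to design capacity, and the energy/charge fallback are all assumptions made for this example.

```python
from pathlib import Path
from typing import Optional

# Hypothetical helpers. The sysfs root is a parameter so that the functions
# can also be run against a copy of /sys.

def network_card_works(iface: str, sysfs: Path = Path("/sys")) -> bool:
    """Assumed rule: a network card works if it has received at least one packet."""
    rx_file = sysfs / "class" / "net" / iface / "statistics" / "rx_packets"
    try:
        return int(rx_file.read_text().strip()) > 0
    except (OSError, ValueError):
        return False  # no such interface or unreadable counter


def battery_capacity_percent(battery: str, sysfs: Path = Path("/sys")) -> Optional[float]:
    """Current full capacity relative to the design capacity, in percent."""
    base = sysfs / "class" / "power_supply" / battery
    # Batteries report either energy_* (µWh) or charge_* (µAh) attributes.
    for prefix in ("energy", "charge"):
        full = base / f"{prefix}_full"
        design = base / f"{prefix}_full_design"
        if full.exists() and design.exists():
            design_value = int(design.read_text().strip())
            if design_value > 0:
                return 100.0 * int(full.read_text().strip()) / design_value
    return None  # capacity not reported
```

On a laptop you could call `network_card_works("eth0")` or `battery_capacity_percent("BAT0")`. Interface and battery names vary between machines.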

You can check all your previous probes now — the statuses are already updated!

If you are a new user, you can create a probe with the hw-probe command:

hw-probe -all -upload

Nonworking devices are collected in the Github repository: https://github.com/linuxhw/HWInfo

Thanks to everyone for your attention and new computer probes!

EDID repository

We have recently created the largest open repository of monitor characteristics, containing EDID structures for more than 9000 monitors: https://github.com/linuxhw/EDID

EDID (Extended Display Identification Data) is a metadata format that display devices use to describe their capabilities to a video source. The data format is defined by a standard published by VESA. The EDID data structure includes the manufacturer name and serial number, product type, phosphor or filter type, timings supported by the display, display size, luminance data and (for digital displays only) pixel mapping data.
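To make the structure more concrete, the following Python sketch decodes a few fields of the 128-byte EDID 1.x base block. It reads the fixed 8-byte header, the three-letter manufacturer ID packed as 5-bit letters, the product code, the serial number, the manufacture year, the EDID version and the maximum image size in centimeters. It also verifies the checksum: all 128 bytes must sum to 0 modulo 256. The function name and the returned dictionary are illustrative only. Extension blocks and detailed timing descriptors are ignored.

```python
from typing import Dict

EDID_HEADER = bytes([0x00, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0x00])

def parse_edid(data: bytes) -> Dict[str, object]:
    """Decode selected fields of an EDID 1.x base block (first 128 bytes)."""
    block = data[:128]
    if len(block) < 128 or block[:8] != EDID_HEADER:
        raise ValueError("not an EDID base block")
    if sum(block) % 256 != 0:
        raise ValueError("EDID checksum mismatch")
    # Bytes 8-9 (big-endian): three letters, 5 bits each, where 1 means 'A'.
    packed = int.from_bytes(block[8:10], "big")
    vendor = "".join(chr(((packed >> shift) & 0x1F) + ord("A") - 1) for shift in (10, 5, 0))
    return {
        "vendor": vendor,
        "product_code": int.from_bytes(block[10:12], "little"),
        "serial": int.from_bytes(block[12:16], "little"),
        "year": 1990 + block[17],          # year of manufacture (or model year)
        "edid_version": f"{block[18]}.{block[19]}",
        "size_cm": (block[21], block[22]),  # max horizontal, vertical image size
    }
```

On Linux, the raw EDID of a connected monitor can usually be read from `/sys/class/drm/<connector>/edid` and passed to this function as bytes.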

The best-known similar project is EDID.tv, which also contains quite a lot of information about monitors.

The repository is filled automatically from recent hardware probes. You can contribute to it by running one simple command in the terminal:

hw-probe -all -upload

The hw-probe utility is pre-installed in the ROSA Linux distribution. Users of other systems may use the AppImage, the Docker image, a LiveCD or other techniques to create probes.

HW Probe 1.4

Friends, I'd like to introduce the new hw-probe 1.4.

The most significant change in this release is client-side anonymization of probes. Previously, "private data" (IPs, MACs, serial numbers, hostname, username, etc.) was removed on the server side. Now you do not have to worry about how the server handles your "private data", since it is not uploaded at all. You can upload probes from any computer or server without the risk of a security leak.

The update is available in repositories.

Other changes:

- Up to 3 times faster probing of hardware

- Collect SMART info from drives connected by USB

- Initial support for probing drives in MegaRAID

- Improved detection of LCD monitors and drives

- Collect info about MMC controllers

- Probe for mcelog and cpuid

- Etc.

You can, as before, create a probe of your computer via the application in the SimpleWelcome menu or from the console with a simple command:

hw-probe -all -upload

Thanks to everyone for your attention and new computer probes!

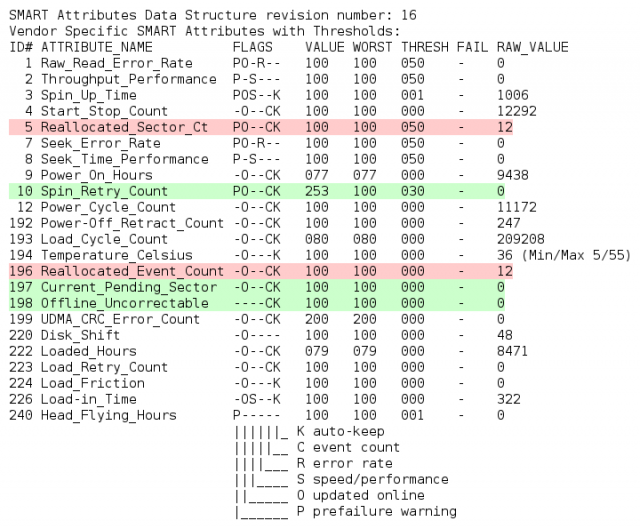

Highlighting important SMART attributes in probes

We've started highlighting the most important SMART attributes in computer probes, namely those that correlate with real mechanical failures according to studies by Google and Backblaze.

A zero value of an important attribute is highlighted in green, and any positive value in red. You can review your probes now!

You can find links to the studies, as well as a complete list of highlighted important attributes here: https://github.com/linuxhw/SMART
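The coloring rule is simple enough to sketch. The Python example below parses the attribute table printed by `smartctl -A` and marks important attributes green when the raw value is zero and red otherwise. The set of attribute IDs is an assumption based on the attributes commonly cited from Backblaze's analysis (5, 187, 188, 197, 198). The list actually used for highlighting is the one published in the linuxhw/SMART repository.

```python
from typing import Dict, Optional

# Assumption: attribute IDs commonly cited from Backblaze's drive statistics
# (Reallocated_Sector_Ct, Reported_Uncorrect, Command_Timeout,
#  Current_Pending_Sector, Offline_Uncorrectable).
IMPORTANT_ATTRIBUTES = {5, 187, 188, 197, 198}

def highlight(attr_id: int, raw_value: int) -> Optional[str]:
    """'green' for a zero raw value, 'red' for a positive one, None if not important."""
    if attr_id not in IMPORTANT_ATTRIBUTES:
        return None
    return "green" if raw_value == 0 else "red"

def highlight_smartctl(attribute_table: str) -> Dict[str, str]:
    """Colors for the important attributes found in 'smartctl -A' output."""
    colors = {}
    for line in attribute_table.splitlines():
        fields = line.split()
        # Data rows: ID# ATTRIBUTE_NAME FLAG VALUE WORST THRESH TYPE UPDATED WHEN_FAILED RAW_VALUE
        if len(fields) < 10 or not fields[0].isdigit() or not fields[9].isdigit():
            continue
        color = highlight(int(fields[0]), int(fields[9]))
        if color is not None:
            colors[fields[1]] = color
    return colors
```

Rows whose raw value is not a plain integer (some vendors print extra text there) are skipped, which keeps the sketch short.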

You can, as before, create a probe of your computer via the application in the SimpleWelcome menu or from the console with a simple command:

hw-probe -all -upload

Thank you all for your attention and new hardware probes!

List of devices with poor Linux compatibility

We have created a new project that collects a list of computer hardware devices with poor Linux compatibility, based on 4 years of Linux-Hardware.org data: https://github.com/linuxhw/HWInfo

The repository contains about 26 thousand anonymized hwinfo reports from computers in various configurations (different kernels and OSes, mostly ROSA Fresh). A device is included in the list of poorly supported devices if there is at least one user probe in which no driver was found for it. The 'Missed' column shows the percentage of such probes. If the number of such probes is small, it means that the driver has already been added in newer versions of the OS. In this case we show the minimal Linux kernel version in which the driver was present.

Devices are divided into categories. For each category we calculate the ratio of poorly supported devices to the total number of devices tested in this category.
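The selection rule and the statistics described above can be sketched in Python as follows. The `Probe` record, its fields and the sample values in the usage example are hypothetical. The code only shows how the 'Missed' percentage, the minimal kernel version with a driver, and the per-category ratio of poorly supported devices could be derived from per-probe observations.

```python
import re
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple

@dataclass
class Probe:
    category: str       # device category (hypothetical naming)
    device_id: str      # "vendor:device"
    kernel: str         # kernel version of the probed system
    driver_found: bool  # was a driver found for this device in this probe?

def kernel_key(version: str) -> Tuple[int, ...]:
    """Numeric sort key, e.g. "4.15.0-45-generic" -> (4, 15, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    return tuple(int(part) for part in match.group().split(".")) if match else ()

def summarize(probes: List[Probe]):
    """Return (poorly supported devices, per-category ratio of such devices)."""
    per_device: Dict[Tuple[str, str], List[Probe]] = defaultdict(list)
    for probe in probes:
        per_device[(probe.category, probe.device_id)].append(probe)

    poorly_supported = {}
    for key, items in per_device.items():
        missed = sum(not p.driver_found for p in items)
        if missed == 0:
            continue  # a driver was found in every probe: not listed
        kernels_with_driver = [p.kernel for p in items if p.driver_found]
        poorly_supported[key] = {
            "missed_percent": round(100 * missed / len(items), 1),
            "min_kernel": min(kernels_with_driver, key=kernel_key) if kernels_with_driver else None,
        }

    tested: Dict[str, int] = defaultdict(int)
    poor: Dict[str, int] = defaultdict(int)
    for category, _ in per_device:
        tested[category] += 1
    for category, _ in poorly_supported:
        poor[category] += 1
    ratios = {category: poor[category] / tested[category] for category in tested}
    return poorly_supported, ratios
```

For example, a device that lacks a driver in one of its three probes gets a 'Missed' value of 33.3%, and its minimal kernel is the oldest kernel among the probes where the driver was found.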

At the moment, the study is limited to PCI and USB devices. We plan to include other devices in the future.

Please check whether your known unsupported devices are present in the table. The device ID can be taken from the output of the 'lspci -vvnn' command, where it is shown in square brackets, for example [1002:9851].
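If you have many `lspci -vvnn` outputs to check, the vendor:device IDs can be extracted with a regular expression. This minimal Python sketch scans only the unindented device lines, because indented lines such as Subsystem carry other IDs. It takes the last `[xxxx:xxxx]` group on each line as the device ID. The function name is hypothetical.

```python
import re
from typing import List

# A vendor:device pair in square brackets, e.g. [1002:9851].
PCI_ID = re.compile(r"\[([0-9a-fA-F]{4}):([0-9a-fA-F]{4})\]")

def device_ids(lspci_output: str) -> List[str]:
    """Vendor:device IDs of all devices listed in 'lspci -vvnn' output."""
    ids = []
    for line in lspci_output.splitlines():
        if not line or line[0].isspace():
            continue  # indented lines (Subsystem, Kernel driver in use, ...) are details
        pairs = PCI_ID.findall(line)
        if pairs:
            vendor, device = pairs[-1]
            ids.append(f"{vendor}:{device}".lower())
    return ids
```

The class code, for example `[0300]`, and bracketed vendor names such as `[AMD/ATI]` do not match the pattern, so they are ignored.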

Real-life reliability test for hard drives

We have created a new open project to estimate the reliability of hard drives (HDD/SSD) in real-life conditions, based on the SMART data collected in the Linux-Hardware.org database. The initial data (SMART reports), analysis methods and results are publicly shared in a new Github repository: https://github.com/linuxhw/SMART. Everyone can contribute to the report by uploading probes of their computers with the hw-probe tool!

The primary aim of the project is to find drives with the longest "power on hours" and the minimal number of errors. We use the following formula as a measure of reliability: Power_On_Hours / (1 + Number_Of_Errors), i.e. the time to the first error or between errors.

Please be careful when reading the results table. Pay attention not only to the rating, but also to the number of checked samples of each model. If a rating is low, look at the number of power-on days and the number of errors that occurred. New drive models appear at the end of the rating and move to the top after a long period of error-free operation.
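The rating formula is easy to reproduce. In this Python sketch, each drive sample gets the score Power_On_Hours / (1 + Number_Of_Errors). Models are ranked by the mean score of their samples, and the sample count is kept alongside so that ratings based on a single drive can be spotted. Averaging per model and the `DriveSample` type are assumptions made for illustration. The actual analysis methods are published in the linuxhw/SMART repository.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

@dataclass
class DriveSample:
    model: str
    power_on_hours: int
    errors: int

def reliability(sample: DriveSample) -> float:
    """Power_On_Hours / (1 + Number_Of_Errors): time to the first error or between errors."""
    return sample.power_on_hours / (1 + sample.errors)

def rate_models(samples: List[DriveSample]) -> List[Tuple[str, float, int]]:
    """(model, mean reliability, number of samples), best model first."""
    by_model: Dict[str, List[DriveSample]] = {}
    for sample in samples:
        by_model.setdefault(sample.model, []).append(sample)
    rows = [(model, sum(map(reliability, items)) / len(items), len(items))
            for model, items in by_model.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)
```

With this formula, a drive with 24000 power-on hours and 2 errors scores 8000, the same as an error-free drive with 8000 hours. This is why the number of samples and power-on days matters when reading the table.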

You can, as before, create a probe of your computer via the application in the SimpleWelcome menu or from the console with a simple command:

hw-probe -all -upload

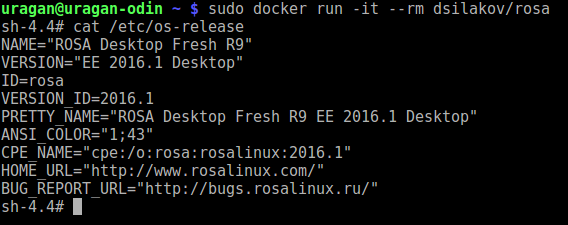

Docker Image for ROSA Desktop Fresh R9

We are often asked where to get a Docker image of ROSA Desktop Fresh. For example, such an image is useful if you want to quickly debug the build of some package and don't have a machine with ROSA installed nearby. It doesn't make sense to set up a virtual machine for a one-time task, while a Docker container perfectly fits most such needs.

For the rosa2016.1 platform, Docker images are available here:

https://hub.docker.com/r/dsilakov/rosa/

https://hub.docker.com/r/sibsau/rosa/

If you need a 2014.1 image, you can find it here:

https://hub.docker.com/r/fgagarin/rosa/

You can launch a Docker container in the usual way:

- docker run -it --rm dsilakov/rosa

Files and scripts that can be used to create such images can be found in ABF: https://abf.io/soft/docker-brew-rosa

Pull requests and suggestions are welcome!

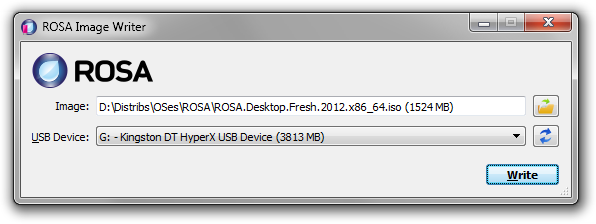

ROSA Image Writer

Optical drives are rapidly disappearing from computers of all kinds, and consequently installing operating systems from USB flash disks is becoming increasingly popular. ISO images of the ROSA distribution were originally intended for burning to DVDs, but they can also be written to flash disks, which lets you boot from them and launch the Live system or start the installation. There is no standard tool for writing images to flash disks; everybody uses whatever they like. In ROSA the command-line tool dd was traditionally recommended for this job. However, it can hardly be called user-friendly, and most users would feel at least some discomfort, if not terror, using it. For Windows users the situation is even worse. Granted, there is a dd port for Windows, but it turned out to have serious bugs that prevent the resulting flash disks from working properly. All this led us to develop our own tool, ROSA Image Writer.

The first version was based on the Windows version of SUSE Studio Image Writer. However, its use of C# (and, naturally, the requirement for .NET Framework), its use of two completely unrelated projects in two different frameworks for the Windows and Linux versions, and some other drawbacks made us write a new tool from scratch. ROSA Image Writer is now developed in C++ with the Qt5 framework and supports both Windows and Linux from the same codebase.

The list of main functions is:

- Selecting the image file via either the usual Open File dialog or by dragging and dropping the file onto the application window.

- The list of USB devices shows for each device its user-friendly name, size, and logical disks that originate from the device.

- When the user inserts or removes a USB device, the list is refreshed automatically.

- While writing is in progress, a progress bar is displayed, which is also mirrored on the taskbar button in Windows 7/8.

- The application supports localization and includes the Russian translation.
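At its core, writing an image to a flash disk is what dd does: it copies the ISO byte for byte onto the device. The Python sketch below illustrates that loop with a progress callback of the kind that drives a progress bar. It is not ROSA Image Writer's code, which is written in C++ with Qt5. The function name and the 4 MiB block size are assumptions. A real tool should also unmount the device's partitions, run with sufficient privileges, and make the user confirm the target device, since writing to the wrong device destroys its data.

```python
import os
from typing import Callable, Optional

def write_image(image_path: str, target_path: str,
                block_size: int = 4 * 1024 * 1024,
                progress: Optional[Callable[[int], None]] = None) -> int:
    """Copy image_path to target_path block by block and return the bytes written."""
    total = os.path.getsize(image_path)
    written = 0
    with open(image_path, "rb") as src, open(target_path, "wb") as dst:
        while True:
            chunk = src.read(block_size)
            if not chunk:
                break
            dst.write(chunk)
            written += len(chunk)
            if progress is not None:
                progress(written * 100 // total)  # percent done
        dst.flush()
        os.fsync(dst.fileno())  # make sure the data really reaches the device
    return written
```

The target can be a block device such as `/dev/sdX` or an ordinary file, which is handy for trying the loop out safely.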

Download links are available on the description page:

Helping ROSA Maintainers To Update Programs

One of the most frequent kinds of requests from ROSA users is a request to update some package in our repositories. Unfortunately, we can't satisfy all update requests immediately. However, every community member can easily help us with this, since with our current build infrastructure you can update packages even if you are not a programmer or maintainer at all. In many cases basic knowledge of a web browser is enough.

Assume that you have noticed a new release of your favorite application and want to update the corresponding package in the ROSA repositories. Consider, for example, the popular Atom code editor: right now the ROSA repositories provide atom 1.2.0, but 1.3.2 was released several days ago. Let's try to update the ROSA package!

First of all, please register in our ABF build environment at http://abf.io. Registration is free and doesn't require any kind of confirmation, so you can log in to ABF as soon as registration is complete.

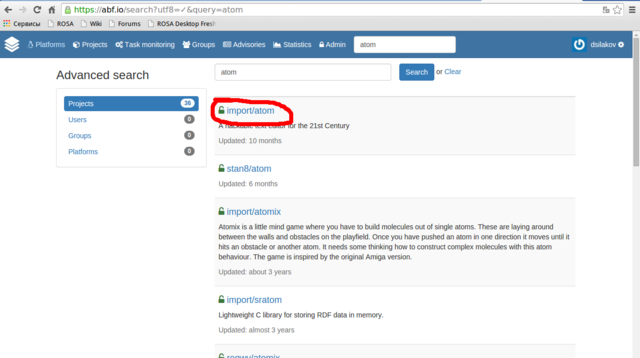

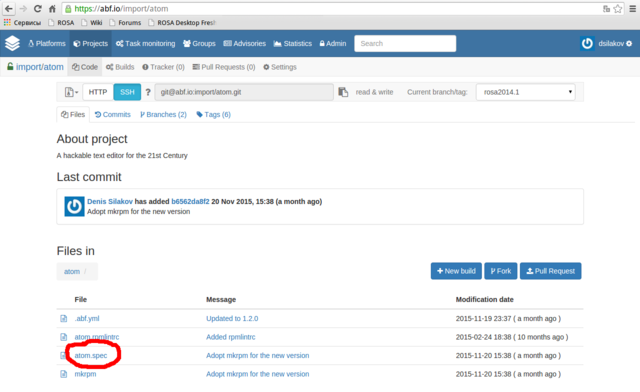

Then find the project corresponding to your package. In our case we need the atom project in the import group (always use the import group, since it contains the projects built into our official repositories!).

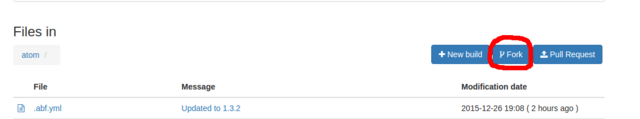

Go to the project page and press "Fork".

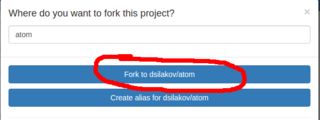

In the dialog that appears, choose Fork to <your_login>/atom. This forks the project into your personal namespace, where you are free to modify it as you wish. Forking is fast, and you will be immediately redirected to the page of the forked project. If you see a message that the repository is empty, just reload the page.

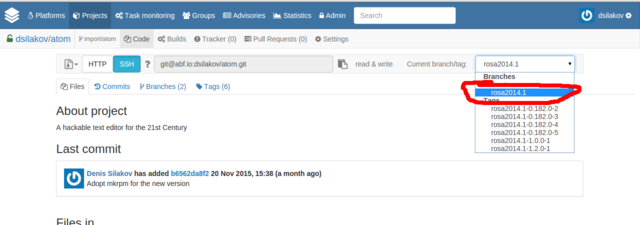

The next step is to switch to the rosa2014.1 branch of the Git repository. If you don't understand what this means, don't worry: you just have to choose "rosa2014.1" in the drop-down list in the upper-right corner of the page. We have used the "rosa2014.1" branch since ROSA Desktop Fresh R4 and will continue to use it at least in R7.

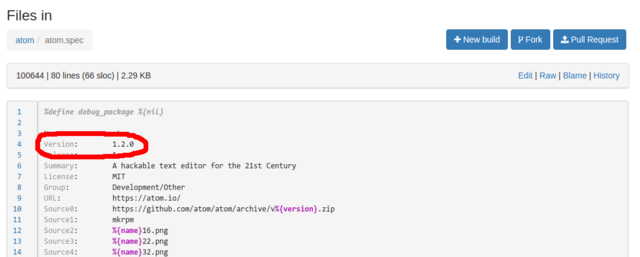

Now find the file with the .spec extension among the project files (atom.spec in our case), click on it and then click Edit to modify it.

All you need to do in this file is update the Version tag, which is usually located near the top of the file. As we can see, this tag is currently set to 1.2.0, and we will replace this value with 1.3.2. Please also write a meaningful message (e.g., "Updated to version 1.3.2") in the "Commit message" text area. That's all: save the file with the corresponding button at the bottom of the page.
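The edit in this step amounts to replacing the value of the first `Version:` tag. If you ever need to do this for many spec files at once, a minimal Python sketch could look like the following. The function is hypothetical and, like the procedure above, leaves other tags such as Release unchanged.

```python
import re

# A "Version:" tag at the start of a line; group 1 keeps the tag and spacing.
VERSION_TAG = re.compile(r"^(Version:[ \t]*)(\S+)", re.MULTILINE)

def bump_version(spec_text: str, new_version: str) -> str:
    """Return spec_text with the first Version tag set to new_version."""
    new_text, count = VERSION_TAG.subn(
        lambda m: m.group(1) + new_version, spec_text, count=1)
    if count == 0:
        raise ValueError("no Version tag found in the spec file")
    return new_text
```

A function is used as the replacement so that version strings are inserted literally, without being interpreted as regex back-references.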

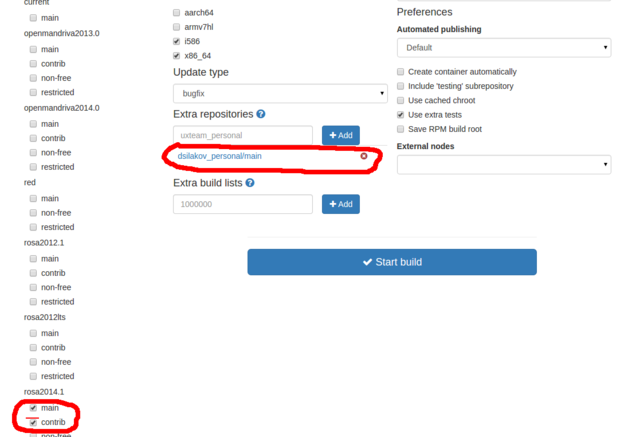

In the previous step we prepared ABF to build a new version of our program. Now it is time to click "New Build" and perform a couple of actions on the page that appears:

- detach your personal repository from the build by clicking the cross next to it

- attach the Contrib repository by checking the "contrib" item in the "rosa2014.1" section

Now press "Start Build" and wait for the result. If the build fails, well, the easy-way update didn't work in this case, and it is time to ask the maintainers or to improve your own skills and dig deeper into the package build procedure. But if the build succeeds, you will be able to download packages with the new version of your application. Feel free to download and test them.

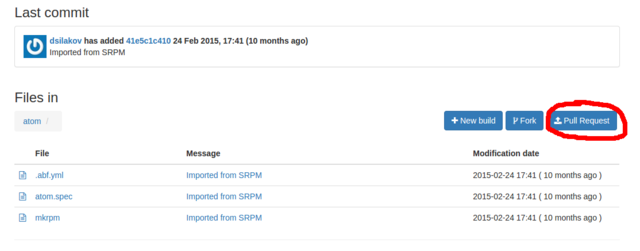

If the new version works fine, feel free to share this update with the whole ROSA community. To do this, just press the "Pull Request" button on your project page.

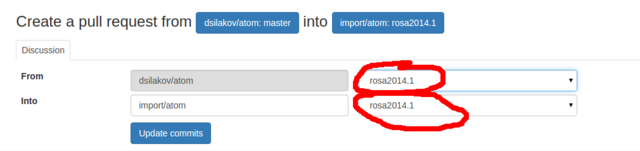

Make sure that you have specified the "rosa2014.1" branch for both the source and target projects, press the "Update commits" button if it becomes visible, write a message in the "Description" field and click "Send Pull Request".

ROSA maintainers will receive your request, review it, and will most likely accept it and build the updated package into the official repositories.

ROSA Desktop Fresh R6 LXQt

Several months after the ROSA Desktop Fresh R6 KDE release, we are happy to announce a lightweight edition of Desktop Fresh R6 that uses the LXQt desktop environment.

Up to Desktop Fresh R5, we released lightweight editions based on LXDE. However, LXDE is based on the GTK+2 library stack, which has not received significant updates since 2011: all new features are now implemented in the GTK+3 series. Migration from GTK+2 to GTK+3 is not smooth, and some developers are not happy with the new stack. In particular, after experimenting with GTK+3, many LXDE developers decided to consider Qt as an alternative, and in 2013 they merged with the Razor-qt project to create a new Qt-based lightweight desktop environment named LXQt.

The old GTK+2-based LXDE is not dead and is still developed by a group of volunteers, but their progress is not as significant as that of LXQt. In particular, there was almost no significant difference in LXDE components between the LXDE editions of ROSA Desktop Fresh R4 and Fresh R5. But our distribution has the word "Fresh" in its name, so we decided to give a new desktop environment a chance. After several months of experiments, integration work and bug fixes, we are ready to present a new edition of ROSA Desktop Fresh based on LXQt.

This release is based on LXQt 0.10.0 - the latest LXQt version at the moment. LXQt is built with Qt5 framework and the Desktop Fresh LXQt edition tends to include applications that also use Qt5, in particular:

- PCManFM-Qt file manager

- qterminal terminal emulator

- lximage-qt image viewer

- trojita mail client

- qpdfview PDF-viewer

- qmmp audio player

- juffed text editor

- qlipper clipboard manager

However, we couldn't find Qt5 programs for all possible use cases, so Desktop Fresh R6 LXQt still provides several GTK applications. The most noticeable ones are LibreOffice and Firefox: we decided that it would not be a good idea to provide semi-functional analogues just because they are based on Qt5 (though volunteers are welcome to test otter-browser from our repositories, which is based on Qt5 and tries to mimic the Opera 12.x GUI).

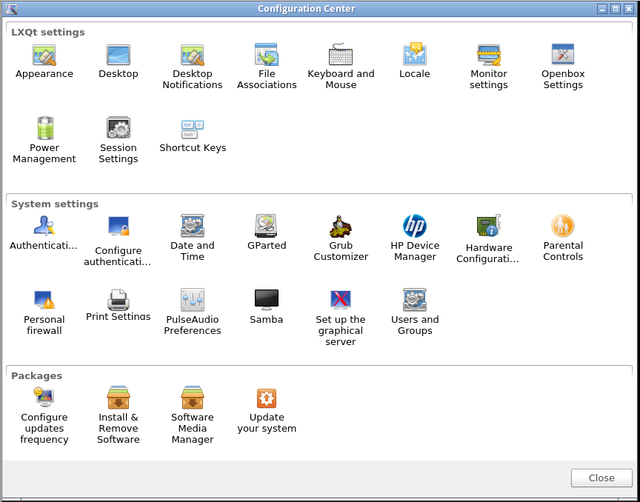

It is worth noting that LXQt has its own Control Center and ROSA-specific configuration tools such as "Install and Remove Programs" are smoothly integrated into it.

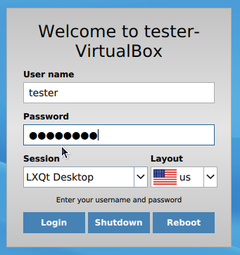

Desktop Fresh R6 LXQt uses the SDDM display manager, which is the recommended DM for Plasma 5, so you will likely see it in future KDE-based ROSA releases. Also note that this is the first ROSA release with a new default kernel: we have switched to the 4.1 LTS branch.

Download

Minimal system requirements

- 256 MB RAM (512 MB is recommended for an installed system, 384 MB for the Live mode)

- 6 GB HDD

- Pentium4/Celeron CPU

How to use a USB device inside a VM under ROSA Virtualization (oVirt 3.5)

This HOWTO describes how to use a USB device in a VM under ROSA Virtualization (oVirt 3.5). For example:

- You may want to use a HASP key inside your VM.

- You may want to use another USB device in your VM, such as a USB flash drive or other USB storage device.

- You may want to use a USB printer or a USB cellular modem inside your VM.

- You may need to use other USB tokens, fingerprint readers, card readers, network cards, USB-to-RS232 adapters, etc.

Or something else... even an iPod or iPhone :)

Before we start, here are some important restrictions you need to know about:

- A virtual machine that uses a USB device will run on the same host to which the USB device is attached.

- Your USB device must be attached to the appropriate host system BEFORE you start the VM that will use it.

- If you use a USB flash drive or other USB storage device that is already mounted on the host system, its file system will be unavailable to the host system after you mount the device inside your VM.

- You must edit the VM properties to enable USB support (in the Console options menu); we recommend choosing "Native" in any case. You must also use the SPICE protocol to work with USB devices: the VNC protocol does not support access to USB devices attached to the host system.

- We have not tested any USB 3.0 devices, since we do not have the appropriate hardware in our DCs.

In this example we use a simple 8GB FAT32 USB flash drive, which is mounted on the host system at /mnt.

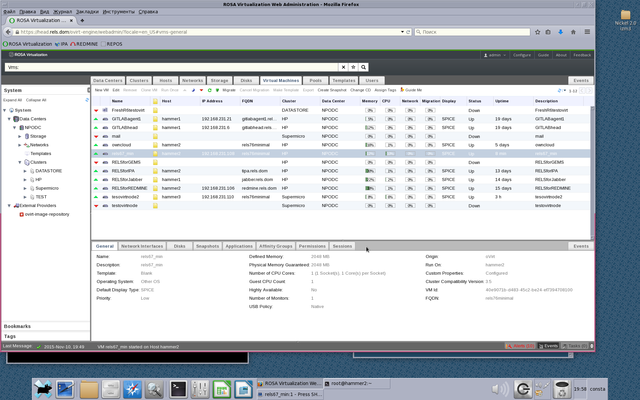

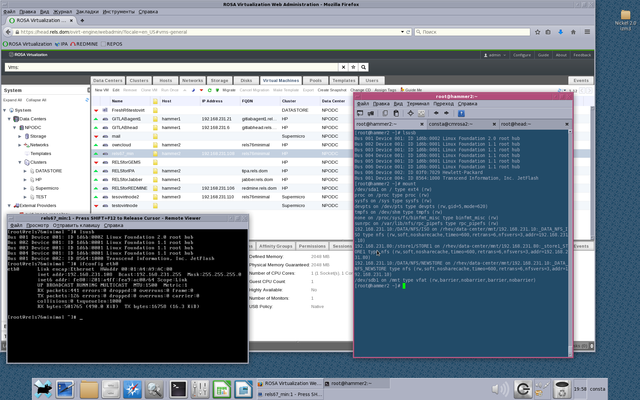

Our host system is named "hammer2" (see Picture 2).

The VM that uses the USB device is named rels67_min (IP: 192.168.231.108, FQDN: rels76minimal).

Finally, here is the HOWTO itself (you must be root or use sudo):

- 1. Attach your USB device to your host system in the DC (in our case "hammer2").

- 2. Install the vdsm-hook-hostusb package on your host system (in our case "hammer2") and on the host running oVirt-engine (in our case host "head"):

yum install vdsm-hook-hostusb

- 3. On your oVirt-engine host run:

engine-config -s UserDefinedVMProperties='hostusb=[\w:&]+'

oVirt-engine will then ask you to specify the appropriate oVirt version.

In our case we choose 3.5 (press 6 on the keyboard).

- 4. Run on the oVirt-engine host (in our case "head"):

/etc/init.d/ovirt-engine restart

- 5. Run on the host system where the USB device is attached (in our case "hammer2"):

/etc/init.d/vdsmd restart

- 6. Check the USB device on the host system:

lsusb

(if lsusb is not available, install the usbutils package: yum install usbutils)

In our example we see our USB device as:

Bus 001 Device 004: ID 8564:1000 Transcend Information, Inc. JetFlash

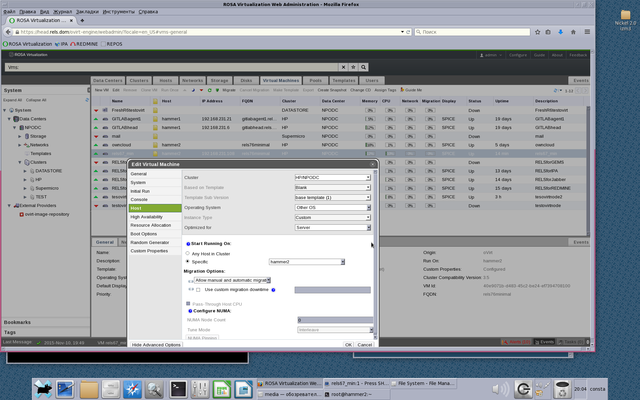

Look at Picture 1.

- 7. Open the VM properties (right-click the VM and choose Edit). The virtual machine must be powered off while you edit its properties.

Attach your VM to the specific host where your USB device is (see Picture 3; we choose host "hammer2").

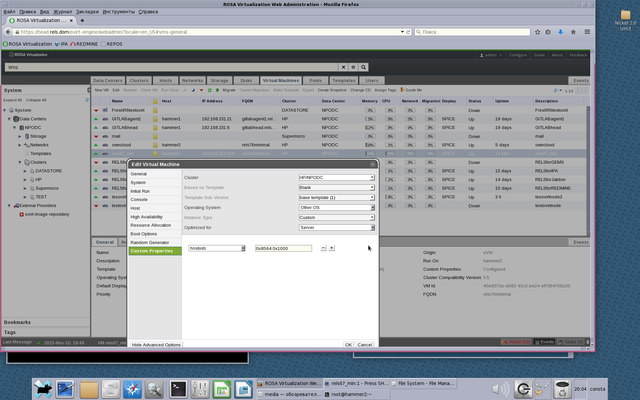

- 8. In the Custom Properties menu, a new option "Please select a key" appears.

Choose hostusb and enter the USB device ID, and do not forget the 0x prefix. In our case we enter 0x8564:0x1000

See Picture 4.

- 9. Start your VM as usual and use SSH, VNC, SPICE, etc. to check the USB device status:

lsusb

Look at Picture 1.

Now you can mount your USB device inside your VM.
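The value entered in step 8 is the vendor and product IDs from the lsusb output, each with a 0x prefix. The Python sketch below derives this value from lsusb lines and checks it against the same character class as the UserDefinedVMProperties regex from step 3. The helper name is hypothetical. Joining several devices with & is an assumption suggested by the & in that regex; this HOWTO does not test it.

```python
import re
from typing import Iterable

LSUSB_ID = re.compile(r"\bID ([0-9a-f]{4}):([0-9a-f]{4})\b")
HOSTUSB_ALLOWED = re.compile(r"[\w:&]+")  # same class as hostusb=[\w:&]+

def hostusb_value(lsusb_lines: Iterable[str]) -> str:
    """Build a hostusb custom property value such as 0x8564:0x1000."""
    ids = []
    for line in lsusb_lines:
        match = LSUSB_ID.search(line)
        if match:
            ids.append(f"0x{match.group(1)}:0x{match.group(2)}")
    value = "&".join(ids)  # assumption: several devices are joined with '&'
    if not value or not HOSTUSB_ALLOWED.fullmatch(value):
        raise ValueError("no usable USB IDs found")
    return value
```

For the flash drive from step 6, the lsusb line `Bus 001 Device 004: ID 8564:1000 Transcend Information, Inc. JetFlash` yields `0x8564:0x1000`.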

Checking ROSA Desktop ISO Consistency By Means Of Checkisomd5

According to our not-so-secret information, most users install ROSA by downloading ISO images from our sites and then either installing from the ISO directly or writing it to a USB flash drive or DVD (yes, it looks like DVD drives are still in use nowadays). Unfortunately, despite the general improvement in Internet speed and quality, something can still go wrong during the download, leaving the user with a broken file. Installation from such a file will likely fail, so it is a good idea to check the integrity of the ISO file beforehand.

A traditional way of verifying a file's content is to calculate its hash sum and compare it with the expected value. The expected value is usually placed in a separate file next to the ISO image, with an .md5, .sha1 or other extension depending on the algorithm used to calculate the sum. So besides the ISO file itself, you should download the file with its hash sum, calculate the hash of the downloaded ISO (by running md5sum ROSA.iso) and compare the result with the expected one. Some programs do some of these tasks for you automatically. For example, K3b, which is used to burn ISO images, can calculate the hash sum of the image and compare it with the one provided in the .md5 file (if the latter is found next to the ISO file).
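For readers who prefer to script the comparison, here is a minimal Python equivalent of running md5sum and comparing the result with the .md5 file, using the standard hashlib module. It assumes the .md5 file uses md5sum's usual `<hex digest>  <file name>` format. The function names are illustrative. This checks the whole file and is a separate mechanism from the checksum that implantisomd5 embeds in the image.

```python
import hashlib

def file_md5(path: str, block_size: int = 1 << 20) -> str:
    """MD5 of a file, read in 1 MiB blocks so that large ISOs need little memory."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(block_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_md5(iso_path: str, md5_path: str) -> bool:
    """Compare the ISO's MD5 with the first field of an md5sum-style file."""
    with open(md5_path) as f:
        expected = f.read().split()[0].lower()
    return file_md5(iso_path) == expected
```

For example, `verify_md5("ROSA.iso", "ROSA.iso.md5")` returns True only if the download is intact.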

A less known way to verify ISO image content is to embed its hash sum into the file itself. ISO9660 images contain an unused section that is large enough to store an MD5 sum. To calculate the MD5 sum of the ISO image content and embed it in the file, one can use the implantisomd5 tool, which is part of the isomd5sum package. To verify the image content (calculate its sum and compare it with the embedded value), one can use the checkisomd5 tool from the same package.

All modern ROSA Desktop Fresh images contain an MD5 sum embedded by implantisomd5, so to verify a downloaded ISO image you can simply run

$ checkisomd5 ROSA.iso

This command has a few additional options. "--verbose" provides additional information about the verification process. "--gauge" displays progress as numbers from 0 to 100. "--md5sumonly" makes the tool calculate and print the hash sum of the ISO content without comparing it with the embedded value.

You can launch checkisomd5 not only against ISO files, but also against block devices - for example, if you have a DVD with ROSA in your DVD drive, you can verify its content by means of checkisomd5 /dev/dvdrw.

Please do not forget to verify downloaded images. This doesn't take long and can save you a lot of time that you would otherwise waste trying to install a system from a broken image.

Maintaining URLs In RPM Metadata

As you likely know, all programs in ROSA Linux are distributed as RPM packages. These include the application files themselves (binary executables, libraries, data, etc.) and so-called metadata: package name, summary, description, requirements and so on. In this paper, we will speak about metadata fields that can contain URLs of external Internet resources.

First of all, this includes the URL field, which points to the program's home page (you can see it among other information in the package manager). Besides that, many package spec files (which contain instructions for rpmbuild on building packages from source code) specify the location of the source code on the Internet:

Source0: http://my-app.org/downloads/my-app-1.0.tar.gz

Why specify a URL here? I guess the original reason was to simplify the maintainer's life and help them find the source code of a new program version on the Internet (sometimes it is not enough to know the application's home page, and you can spend some time browsing that page looking for the sources). Given the source URL, you can just replace my-app-1.0.tar.gz with my-app-2.0.tar.gz, and any downloader will fetch the new upstream version. Long ago you had to do this manually, but nowadays rpmbuild in ROSA Desktop can download files from the Internet by itself. So all you need to do to build a new version is update the Version field in the spec file, provided that the version macro is used instead of a hardcoded value wherever appropriate.
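Updating Version is enough because of macro expansion. When Source0 is written with `%{name}` and `%{version}`, the final URL follows the tags automatically. rpmbuild performs this expansion itself. The Python sketch below only mimics it for these two macros, to show how a tool could derive the download URL from a spec file. The function names are hypothetical, and real spec files may use many other macros, which this sketch leaves untouched.

```python
import re

def expand_macros(value: str, macros: dict) -> str:
    """Expand %{name} and %name style macros; unknown macros are left as-is."""
    def replace(match: re.Match) -> str:
        key = match.group(1) or match.group(2)
        return macros.get(key, match.group(0))
    return re.sub(r"%\{(\w+)\}|%(\w+)", replace, value)

def source0_url(spec_text: str) -> str:
    """Source0 URL with the Name and Version tags substituted."""
    tags = dict(re.findall(r"^(\w+):[ \t]*(.*?)[ \t]*$", spec_text, re.MULTILINE))
    macros = {"name": tags.get("Name", ""), "version": tags.get("Version", "")}
    return expand_macros(tags["Source0"], macros)
```

With `Source0: http://my-app.org/downloads/%{name}-%{version}.tar.gz`, changing `Version: 1.0` to `Version: 2.0` is all it takes for the URL to point at my-app-2.0.tar.gz.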

One more ROSA tool that uses URLs of source code archives is Updates Tracker. It analyzes source URLs from the spec files and decides where to look for new upstream tarballs.

Thus, URLs in package metadata are quite useful and actively used. But like any other Internet resources, from time to time they can disappear or change their location. Package maintainers should detect such situations and update the metadata accordingly. Otherwise users will go to dead pages instead of application home sites, and Updates Tracker will look for new application versions in places where they will never appear.

For popular packages actively maintained by ROSA developers and the community, the metadata is usually updated manually. However, we have quite a few packages in the Contrib repository that are updated rarely or used by so few people that no maintainer pays much attention to them. In addition, manual metadata updates suffer from the usual issues that arise when a human being performs a routine task: one can make a typo, use a wrong URL, or just forget to update the data due to laziness or lack of time.

To solve this problem, routine tasks need to be automated, and URL monitoring is definitely a good candidate for automation. For RPM packaging and maintenance we don't need complex Web crawlers and trackers. Instead, we need a specialized tool that analyzes our spec files, finds dead URLs and tries to look for replacements.

This year we decided that developing such a tool would be a good task for students of the Russian Higher School of Economics during the two weeks they spend at ROSA as part of their practical work. As a result, we got two scripts: one to analyze spec files and detect broken URLs, and another to find replacements for them.

The first one, named find_dead_links, works in a straightforward way: it analyzes a given set of spec files, extracts all URLs from them and checks the availability of every resource. The list of potentially dead URLs is written to the output.
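A minimal Python sketch of this extract-and-check loop is shown below. It is not the students' script: the names are hypothetical, it assumes the spec text has already been macro-expanded (for example with `rpmspec -P`), and it treats a URL as alive if an HTTP `HEAD` request succeeds. The availability check is passed in as a parameter so the logic can be exercised without network access.

```python
import http.client
import re
import urllib.request

# Rough URL pattern: stops at whitespace, quotes and angle brackets.
URL_RE = re.compile(r"(?:https?|ftp)://[^\s\"'<>]+")


def extract_urls(spec_text):
    """Return the distinct URLs of a spec file in order of first appearance."""
    return list(dict.fromkeys(URL_RE.findall(spec_text)))


def http_alive(url, timeout=10.0):
    """Consider a URL alive if a HEAD request succeeds without an HTTP or network error."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout):
            return True
    # URLError/HTTPError and socket timeouts are OSError subclasses.
    except (OSError, ValueError, http.client.HTTPException):
        return False


def find_dead_links(specs, is_alive=http_alive):
    """Map each spec file name to the list of its URLs that failed the check."""
    dead = {}
    for spec_name, spec_text in specs.items():
        broken = [url for url in extract_urls(spec_text) if not is_alive(url)]
        if broken:
            dead[spec_name] = broken
    return dead
```

Some servers reject `HEAD` requests, so a more careful checker might retry with `GET` before declaring a URL dead.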

The second script, named URLFixer, analyzes the output of find_dead_links and tries to find the new location of every resource. Currently it can't detect new locations of application home pages (although with current Web search technologies this doesn't seem impossible even for machines), but it can successfully detect new locations of source tarballs. As our investigation showed, most dead URLs appear for trivial reasons: upstream developers may remove old tarballs from their site (in this case, it is a good signal for package maintainers to update the package) or repack a tarball into another format (e.g., in recent years it has become popular to switch to XZ compression). The tool checks whether one of these cases applies to the tarball under analysis and, if so, tries to determine the new URL.
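The "repacked into another format" case can be sketched as follows: strip the archive suffix from the dead URL, try the same name with each other suffix, and return the first one that is reachable. This is an illustration, not URLFixer's code; the suffix list and function names are assumptions, and `is_alive` stands for any availability predicate (for example an HTTP `HEAD` check).

```python
# Archive formats to try; the exact list URLFixer checks is an assumption here.
ARCHIVE_EXTENSIONS = [".tar.gz", ".tar.bz2", ".tar.xz", ".tgz", ".zip"]


def split_archive_extension(url):
    """Split 'https://host/app-1.0.tar.gz' into ('https://host/app-1.0', '.tar.gz')."""
    for ext in sorted(ARCHIVE_EXTENSIONS, key=len, reverse=True):
        if url.endswith(ext):
            return url[: -len(ext)], ext
    return url, ""


def candidate_urls(dead_url):
    """Same directory and file name, but every other known archive format."""
    stem, ext = split_archive_extension(dead_url)
    if not ext:
        return []  # not an archive URL (e.g. a home page); nothing to try
    return [stem + other for other in ARCHIVE_EXTENSIONS if other != ext]


def fix_url(dead_url, is_alive):
    """Return the first repacked variant that is reachable, or None if there is none."""
    for candidate in candidate_urls(dead_url):
        if is_alive(candidate):
            return candidate
    return None


reachable = {"https://example.org/my-app-1.0.tar.xz"}
# prints https://example.org/my-app-1.0.tar.xz
print(fix_url("https://example.org/my-app-1.0.tar.gz", lambda url: url in reachable))
```

If no variant is reachable, the tarball has most likely been removed, which, as noted above, is itself a hint that the package needs updating.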

Admittedly, these scripts are not complex at all (so it wasn't hard for a couple of students without Linux programming experience to develop them in two weeks), but when run against the ROSA Desktop Fresh repositories, these tools produced excellent results: it turned out that 500 of our packages contained broken URLs, and about 80% of them were quickly fixed with the help of URLFixer.

ROSA hardware DB — the largest in the world

A month ago we asked the community to actively probe their computers to expand our hardware database, in order to beat market leaders such as Ubuntu and openSUSE. A huge number of ROSA users responded, and now we have the largest database of supported hardware in the world! It is arguably also the most technically detailed hardware database in Linux: it contains much more information about devices and computers than other databases, and it is populated automatically by the hardware probe tool.

As of August 25, 2015, the sizes of the largest hardware databases are as follows:

| Database | Size |
|---|---|
| ROSA | 1515 |
| Ubuntu | 1105 |
| openSUSE | 946 |
| h-node | 533 |
| Debian | 226 |

The ROSA hardware database includes 1515 tested computers, about 1.4 times as many as the database of the nearest competitor, Ubuntu. The database includes 768 mobile computers (notebooks and tablets), 728 desktop computers and 18 servers. The oldest tested computer is a 2002 Acer TravelMate 420 with a Pentium 4 (a8af42d6e7). The "biggest" one is an HP Superdome2 with a 15-core Xeon E7-2890 and 2 TB of RAM (9036136416). We have tested 1372 video cards, 332 WiFi cards and thousands of other devices.

Such a large database of supported hardware makes it possible to debug and fix problems with individual devices on users' computers faster and more systematically, and to buy only tested models of computers and devices.

Database of multifunctional devices.

RaceHound 1.0 - Once More about the Races in the Kernel

Version 1.0 of the RaceHound system has been released recently. As its name implies, RaceHound detects so-called data races in the Linux kernel.

To put it simply, a «data race» is a situation when two or more threads of execution (in an application, in the kernel, etc.) may access the same data in memory concurrently and at least one of them modifies the data. Such conditions may lead to very unpleasant consequences but are often quite hard to detect.
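A classic consequence of a data race is a lost update. The Python sketch below does not run real threads (real races depend on timing and are not reproducible); instead it replays two hypothetical threads A and B, each doing `x = x + 1` as a separate read and write, in two different interleavings chosen by hand. The function and schedule names are illustrative only.

```python
def run_schedule(schedule):
    """Replay 'x = x + 1' performed by several threads, step by step, in the given order.

    'read' copies the shared x into the thread's private register;
    'write' stores register + 1 back into the shared x.
    """
    shared_x = 0
    registers = {}
    for thread, step in schedule:
        if step == "read":
            registers[thread] = shared_x
        elif step == "write":
            shared_x = registers[thread] + 1
        else:
            raise ValueError(f"unknown step: {step}")
    return shared_x


one_after_another = [("A", "read"), ("A", "write"), ("B", "read"), ("B", "write")]
interleaved = [("A", "read"), ("B", "read"), ("A", "write"), ("B", "write")]
print(run_schedule(one_after_another))  # 2
print(run_schedule(interleaved))        # 1: B overwrites A's update
```

Both threads "succeeded", yet one increment vanished; because the bad interleaving may occur only rarely, such bugs are hard to catch by testing alone.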

Tools like KernelStrider can help reveal races. They usually find many potential races but may also produce false alarms, that is, report a potential race where no race is actually possible.

RaceHound, on the other hand, may miss something, but if it has detected a race, the race really does happen. These tools work well together: KernelStrider finds potential races, and RaceHound checks whether these races actually happen.

This combination was recently used to detect an interesting race in the «uvcvideo» driver (webcam support) in kernel 4.1-rc5. The developers of the driver, however, believe that nothing bad should happen because of that race, but the race is real nonetheless.

The ideas implemented in RaceHound are rather simple.

- Place software breakpoints (similar to what the debuggers do) on the instructions that may be involved in the races.

- When a software breakpoint hits, determine the address and the size of the memory area the instruction is about to access.

- Place hardware breakpoints on that memory area to detect appropriate accesses to it.

- Make a delay. If some code tries to access that memory area during the delay, the hardware breakpoints will trigger and the race will be revealed.

- Remove the hardware breakpoints and let the original instruction execute as usual.
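The steps above can be modelled in user space with a toy Python sketch. It is not RaceHound code: the real tool uses Kprobes-based software breakpoints and CPU debug registers inside the kernel, whereas here a `WatchedMemory` object (a hypothetical name) plays the role of the hardware breakpoints and every memory access is reported to it explicitly. The rule that a writing instruction conflicts with any access while a reading one conflicts only with writes is an assumption that follows from the data race definition above.

```python
import threading
import time


class WatchedMemory:
    """Toy stand-in for hardware breakpoints: a list of watched address ranges."""

    def __init__(self):
        self._lock = threading.Lock()
        self._watches = []  # (start, end, owner, trigger_on_reads)
        self.races = []     # (thread that hit the breakpoint, conflicting thread, address)

    def access(self, thread, addr, size, is_write):
        """Model one memory access; record a race if it hits another thread's watch."""
        with self._lock:
            for start, end, owner, on_reads in self._watches:
                overlaps = addr < end and start < addr + size
                if overlaps and owner != thread and (is_write or on_reads):
                    self.races.append((owner, thread, addr))

    def breakpoint_hit(self, thread, addr, size, is_write, delay, armed=None):
        """Software breakpoint handler for an instruction accessing [addr, addr + size)."""
        # A writing instruction conflicts with any access; a reading one only with writes.
        watch = (addr, addr + size, thread, is_write)
        with self._lock:
            self._watches.append(watch)       # "place hardware breakpoints"
        if armed is not None:
            armed.set()                       # lets the demo below start its access
        time.sleep(delay)                     # the delay: other threads may run now
        with self._lock:
            self._watches.remove(watch)       # "remove hardware breakpoints"
        self.access(thread, addr, size, is_write)  # original instruction executes


mem = WatchedMemory()
armed = threading.Event()
worker = threading.Thread(target=mem.breakpoint_hit,
                          args=("A", 0x1000, 4, True, 0.5, armed))
worker.start()
armed.wait()
mem.access("B", 0x1002, 2, False)  # B reads 2 bytes inside A's 4-byte area
worker.join()
print(mem.races)  # [('A', 'B', 4098)]
```

The sketch also shows why no false alarms arise: a race is recorded only when a conflicting access actually happens while the watch is armed, though a race whose conflicting access falls outside the delay window is missed.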

As is often the case, the devil is in the details. Implementing that algorithm was far from easy, so it is no surprise that RaceHound has been in development since the summer of 2012.

In version 1.0, our specialists overhauled the core components of RaceHound. The previous versions (0.x) could only analyze one kernel module at a time, with additional restrictions. Now RaceHound can monitor code from several modules and the kernel proper at the same time: any code on which a software breakpoint can be placed.

Besides, during the development of RaceHound 1.0, an error in the implementation of software breakpoints (Kprobes, to be exact) was found and fixed in the kernel. The fix should appear in kernel 4.1.

ROSA Enterprise Desktop X2

ROSA company is glad to announce ROSA Enterprise Desktop X2, a new version of its operating system for enterprise use. The distribution includes software for all common tasks: an office suite, an Internet browser, e-mail and IM clients, audio and video players and editors, and many other programs. The distribution is based on ROSA Desktop Fresh KDE but provides additional stability guarantees: all updates except fixes for critical bugs and security holes are published to the RED repositories only after careful testing by the QA team and users of ROSA Desktop Fresh.

If you would like to buy this distribution or get an ISO image for internal testing, please contact the ROSA sales department. See our site for details.